By Mario Chow & Figo @IOSG

Introduction

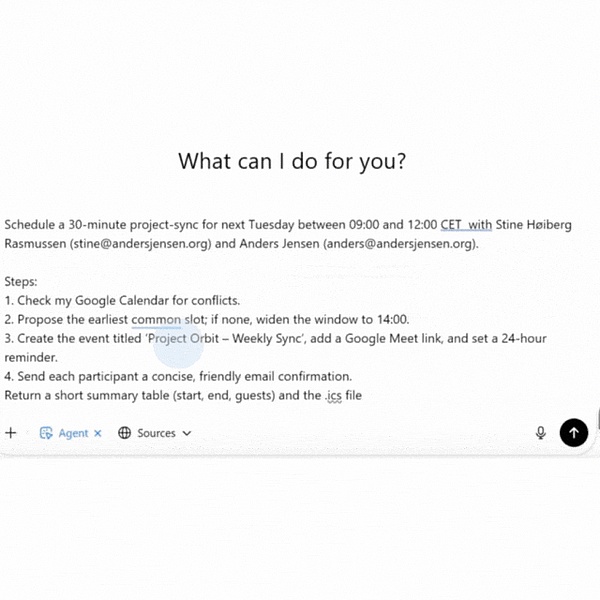

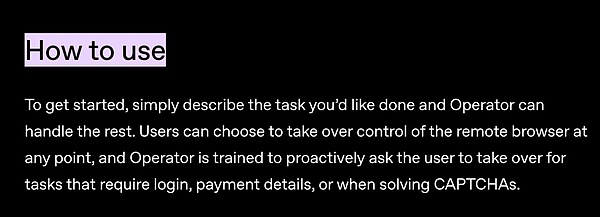

Over the past 12 months, the web browser's relationship with automation has changed dramatically. Nearly every major tech company is scrambling to build its own browser agent. The trend has become increasingly clear since the end of 2024: OpenAI launched Operator (now Agent Mode) in January 2025, Anthropic released a "Computer Use" feature for Claude, Google DeepMind launched Project Mariner, Opera announced its agentic browser Neon, and Perplexity AI launched the Comet browser. The signal is clear: the future of AI lies in agents that can autonomously navigate the web.

This trend isn't just about adding smarter chatbots to browsers; it's a fundamental shift in how machines interact with digital environments. Browser agents are AI systems that can "see" web pages and take actions such as clicking links, filling out forms, scrolling, and entering text, just as human users do. This model promises to unlock enormous productivity and economic value by automating tasks that currently require manual effort or are too complex for traditional scripts.

▲ GIF demonstration: an AI browser agent in action, following instructions, navigating to the target dataset page, automatically taking screenshots, and extracting the required data.

Who will win the AI browser war?

Nearly every major tech company (and some startups) is developing its own browser agent. Here are a few of the most representative projects:

OpenAI – Agent Mode

OpenAI's Agent Mode (formerly known as Operator, launched in January 2025) is an AI agent with its own browser. It can handle a variety of repetitive online tasks, such as filling out web forms, ordering groceries, and scheduling meetings, all through the standard web interface that humans use.

▲ The AI agent schedules meetings like a professional assistant: checks the calendar, finds available time slots, creates events, sends confirmations, and generates .ics files for you.

Anthropic – Claude's "Computer Use"

At the end of 2024, Anthropic introduced a new "Computer Use" feature for Claude 3.5, giving it the ability to operate computers and browsers the way a human does. Claude can look at the screen, move the cursor, click buttons, and enter text. It was the first large-model agent tool of its kind to enter public beta, allowing developers to have Claude navigate websites and applications automatically. Anthropic positions it as an experimental feature whose primary goal is automating multi-step workflows on the web.

Perplexity – Comet

AI startup Perplexity (known for its answer engine) launched the Comet browser in mid-2025 as an AI-powered alternative to Chrome. At its core, Comet is a conversational AI search engine built into the address bar (omnibox), providing instant answers and summaries instead of traditional search links. Comet also includes the Comet Assistant, a sidebar-resident agent that automates routine tasks across websites. For example, it can summarize your open emails, schedule meetings, manage browser tabs, or browse and scrape the web on your behalf. By letting the agent perceive the current page's content through a sidebar interface, Comet aims to integrate browsing and AI assistance seamlessly.

Real-World Application Scenarios of Browser Agents

The previous section reviewed how major tech companies (OpenAI, Anthropic, Perplexity, and others) have built browser agent functionality into various product forms. To better understand their value, let's examine real-world scenarios where these capabilities are being applied in daily life and enterprise workflows.

Daily Web Automation

E-commerce and Personal Shopping

A very practical scenario is delegating shopping and booking tasks to agents. The agent can fill your online shopping cart and place an order based on a fixed list, or it can search for the lowest price across multiple retailers and complete the checkout process on your behalf. For travel, you can ask the AI to perform tasks like: "Book me a flight to Tokyo next month for under $800 and a hotel with free Wi-Fi." The agent handles the entire process: searching for flights, comparing options, filling out passenger information, and completing the hotel reservation, all through the airline and hotel websites. This level of automation goes far beyond existing travel bots: rather than just making recommendations, it directly executes the purchase.

Improving Office Productivity

Agents can automate many repetitive tasks that people perform in their browsers. For example, they can organize emails and extract to-do items, or check multiple calendars for availability and automatically schedule meetings. Perplexity's Comet Assistant can already summarize your inbox contents and add appointments to your calendar through a web interface. With your permission, agents can also log into SaaS tools to generate regular reports, update spreadsheets, or submit forms. Imagine an HR agent automatically logging into various job-posting websites, or a sales agent updating lead data in a CRM system. These mundane tasks would otherwise consume significant employee time, but AI can now handle them by operating web forms and pages directly.

Beyond single tasks, agents can orchestrate entire workflows across multiple web systems. Each step in such a workflow requires a different web interface, which is exactly what browser agents excel at. Agents can log into various dashboards for troubleshooting and even orchestrate processes such as onboarding new employees (creating accounts across multiple SaaS websites). Essentially, any multi-step operation that currently requires clicking through multiple websites could be performed by an agent.

Current Challenges and Limitations

Despite their enormous potential, today's browser agents are far from perfect. Current implementations reveal several long-standing technical and infrastructure challenges:

Architectural Mismatches

The modern web was designed for human-operated browsers and has evolved over time to actively resist automation. Data is often buried in HTML/CSS optimized for visual presentation, gated behind interaction gestures (hover, swipe), or accessible only through undocumented APIs.

On top of this, anti-scraping and anti-fraud systems add further barriers. These tools combine IP reputation, browser fingerprinting, JavaScript challenge responses, and behavioral analysis (e.g., mouse movement randomness, typing rhythm, dwell time). Paradoxically, the more "perfect" and efficient an AI agent appears (for example, filling out forms instantly and without error), the easier it is to identify as malicious automation. This can lead to hard failures: an OpenAI or Google agent might successfully complete all pre-checkout steps only to be stopped by a CAPTCHA or secondary security filter.

The combination of a human-optimized interface and a bot-unfriendly defense layer forces agents to adopt fragile "human-mimicking" strategies. This approach is highly prone to failure and has a low success rate (less than one-third of transactions are completed without human intervention).
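To make the "too perfect to be human" paradox concrete, here is a minimal Python sketch of the kind of timing check a behavioral-analysis layer might run. It is not how any particular anti-bot product works: the function names, the thresholds (`min_cv`, `min_mean_ms`), and the sample data are all assumptions chosen for illustration.

```python
from statistics import mean, pstdev


def timing_regularity_score(intervals_ms):
    """Coefficient of variation of inter-keystroke intervals.

    Values near 0 mean the events are almost perfectly evenly spaced,
    which is typical of scripted input rather than human typing.
    """
    if len(intervals_ms) < 2:
        raise ValueError("need at least two intervals")
    avg = mean(intervals_ms)
    if avg <= 0:
        raise ValueError("intervals must be positive")
    return pstdev(intervals_ms) / avg


def looks_automated(intervals_ms, min_cv=0.15, min_mean_ms=40.0):
    """Flag input that is too uniform or too fast.

    Both thresholds are hypothetical values for this sketch, not
    numbers taken from a real detection system.
    """
    too_uniform = timing_regularity_score(intervals_ms) < min_cv
    too_fast = mean(intervals_ms) < min_mean_ms
    return too_uniform or too_fast


# Made-up sample data: a script typing at a fixed rate vs. an uneven human rhythm.
scripted = [10, 10, 10, 10, 10]
human = [120, 310, 95, 240, 180]

print(looks_automated(scripted))  # flagged: zero variance and very fast
print(looks_automated(human))     # not flagged: irregular and slower
```

Real systems combine many such signals with IP reputation and fingerprinting, which is why an agent that merely adds random delays still tends to be caught.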

Trust and Security Concerns

Giving agents full control often requires access to sensitive information: login credentials, cookies, two-factor authentication tokens, and even payment information. This raises understandable concerns for both users and businesses.

Based on these risks, current systems generally take a cautious approach:

Google's Mariner does not enter credit card information or agree to terms of service itself; it hands those steps back to the user.

OpenAI’s Operator prompts the user to take over a login or CAPTCHA challenge.

The result: frequent pauses and handoffs between AI and humans, undermining the experience of seamless automation.
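The handoff behavior described above can be pictured as a simple policy gate in front of each agent action. The sketch below is only an illustration of that idea: the action kinds, the `SENSITIVE_KINDS` set, and the `decide` and `run` helpers are hypothetical and do not reflect how Mariner or Operator are actually implemented.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"    # the agent performs the step itself
    HAND_OFF = "hand_off"  # the agent pauses and returns control to the user


# Hypothetical action kinds that this sketch treats as too sensitive to automate.
SENSITIVE_KINDS = {"login", "captcha", "payment", "accept_terms"}


@dataclass(frozen=True)
class Action:
    kind: str
    description: str


def decide(action: Action) -> Decision:
    """Return HAND_OFF for sensitive steps and PROCEED for everything else."""
    if action.kind in SENSITIVE_KINDS:
        return Decision.HAND_OFF
    return Decision.PROCEED


def run(plan):
    """Walk a plan and record which steps would interrupt the user."""
    return [(action.description, decide(action)) for action in plan]


plan = [
    Action("navigate", "open the airline website"),
    Action("fill_form", "enter passenger details"),
    Action("payment", "enter credit card number"),
]

for description, decision in run(plan):
    print(f"{decision.value:8s} {description}")
```

Even in this toy plan, one of three steps interrupts the user, which is the friction the section describes.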

Despite these obstacles, progress is rapid. Companies like OpenAI, Google, and Anthropic are learning from their failures with each iteration. As demand grows, a "co-evolution" is likely to occur: websites will become more agent-friendly in scenarios where it benefits them, while agents will continue to improve their ability to mimic human behavior to get past existing barriers.

Approaches and Opportunities

Current browser agents face two distinct realities: on the one hand, the hostile environment of Web2, where anti-scraping and security defenses are ubiquitous; on the other, the open environment of Web3, where automation is often encouraged. This difference determines the direction of the various solutions, which can be roughly divided into two categories: those that help agents get past the hostile environment of Web2, and those that are native to Web3.

While the challenges facing browser agents remain significant, new projects are emerging that attempt to address them directly. The cryptocurrency and decentralized finance (DeFi) ecosystem is becoming a natural testing ground because it is open, programmable, and less hostile to automation. Open APIs, smart contracts, and on-chain transparency remove many of the friction points common in the Web2 world.

Below are four types of solutions, each addressing one or more of the current core limitations:

Native agent browsers for on-chain operations

These browsers are designed from the ground up to be driven by autonomous agents and are deeply integrated with blockchain protocols. Unlike a conventional browser such as Chrome, where on-chain automation depends on Selenium, Playwright, or wallet plugins, native agent browsers directly expose APIs and trusted execution paths for agents to call. In decentralized finance, transaction validity relies on cryptographic signatures, not on whether the user is "human-like." Therefore, in an on-chain environment, agents can sidestep the CAPTCHAs, fraud detection scores, and device fingerprinting checks common in the Web2 world. However, if these browsers are pointed at Web2 websites like Amazon, they cannot bypass the related defenses; in that scenario, normal anti-bot measures will still be triggered.

The value of an agent browser isn't that it magically grants access to all websites, but rather that it offers:

Native Blockchain Integration: Built-in wallet and signature support, eliminating the need for MetaMask popups or parsing the DOM of a dApp frontend.

Automation-First Design: Stable, high-level commands that map directly to protocol operations.

Security Model: Fine-grained permission control and sandboxing ensure the security of private keys during automation.

Performance Optimization: Ability to execute multiple on-chain calls in parallel without browser rendering or UI delays.
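As a rough illustration of what "stable, high-level commands that map directly to protocol operations" could look like, the Python sketch below parses a slash command of the form `/swap 100 USDC to SOL` into a structured intent that a signing layer could act on. The grammar, the `SwapIntent` type, and the `parse_swap` function are assumptions made for this example and are not taken from any real agent browser.

```python
import re
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class SwapIntent:
    """Structured form of a swap request (hypothetical type for this sketch)."""
    amount: Decimal
    sell_token: str
    buy_token: str


# Assumed grammar: /swap <amount> <sell token> to <buy token>
_SWAP_RE = re.compile(
    r"^/swap\s+(\d+(?:\.\d+)?)\s+([A-Za-z0-9]+)\s+to\s+([A-Za-z0-9]+)$",
    re.IGNORECASE,
)


def parse_swap(command: str) -> SwapIntent:
    """Parse a swap command, raising ValueError on anything unexpected."""
    match = _SWAP_RE.match(command.strip())
    if match is None:
        raise ValueError(f"unrecognized command: {command!r}")
    amount, sell, buy = match.groups()
    # Decimal avoids float rounding when handling token amounts.
    return SwapIntent(Decimal(amount), sell.upper(), buy.upper())


print(parse_swap("/swap 100 USDC to SOL"))
```

The point of the design is that the agent emits a small, validated intent rather than clicking through a dApp frontend; anything that fails to parse is rejected before it reaches a wallet.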

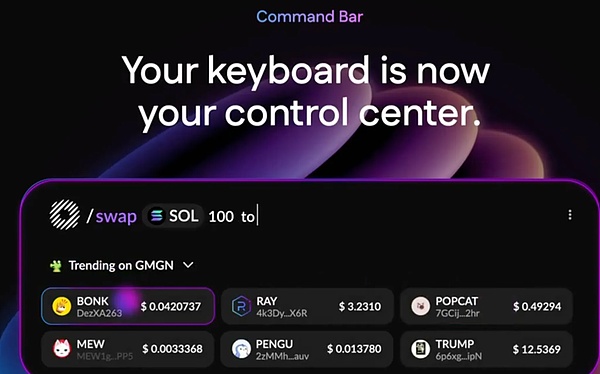

Case Study: Donut

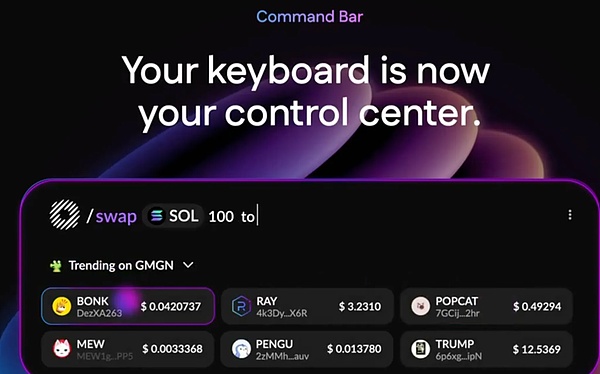

Donut integrates blockchain data and operations as first-class citizens. Users (or their agents) can hover to view real-time risk indicators for tokens or directly enter natural language commands like "/swap 100 USDC to SOL." By bypassing the adversarial friction points of Web2, Donut enables agents to operate at full speed in DeFi, improving liquidity, arbitrage, and market efficiency.

Verifiable and Trusted Agent Execution

Granting agents sensitive permissions is risky. Solutions in this category use Trusted Execution Environments (TEEs) or Zero-Knowledge Proofs (ZKPs) to cryptographically confirm the expected behavior of an agent before execution, allowing users and counterparties to verify agent actions without exposing private keys or credentials.

Case Study: Phala Network

Phala uses TEEs (such as Intel SGX) to isolate and protect the execution environment, preventing Phala operators or attackers from snooping on or tampering with agent logic and data. A TEE acts like a hardware-backed "safe room," ensuring confidentiality (its contents are invisible to the outside world) and integrity (its contents cannot be modified from outside). For a browser agent, this means it can log in, hold session tokens, or process payment information without that data ever leaving the safe room. Even if the user's machine, operating system, or network is compromised, this sensitive data stays protected. This directly alleviates one of the biggest obstacles to agent adoption: the trust issue around sensitive credentials and operations.

Modern anti-bot detection systems not only check whether requests are "too fast" or "automated," but also combine IP reputation, browser fingerprinting, JavaScript challenge responses, and behavioral analysis (such as cursor movement, typing rhythm, and session history). Agents originating from data center IPs or from completely repeatable browsing environments are easily identified. To address this issue, distributed data and browser networks move away from crawling web pages optimized for humans and instead directly collect and provide machine-readable data, or proxy traffic through real human browsing environments. This approach avoids the weaknesses of traditional crawlers in parsing and anti-crawling, providing cleaner and more reliable input for agents. By proxying traffic through these real-world sessions, a distributed network allows AI agents to access web content the way humans do, without immediately being blocked.

Examples:

Grass: A decentralized data/DePIN network in which users share unused residential bandwidth, providing agent-friendly, geographically diverse access channels for public web data collection and model training.

WootzApp: An open-source mobile browser that supports cryptocurrency payments and features a backend proxy and zero-knowledge identity; it gamifies AI/data tasks for consumers.

Sixpence: A distributed browser network that routes AI agents' traffic through the browsing sessions of global contributors.

However, this isn't a complete solution. Behavioral detection (mouse/scroll tracking), account-level restrictions (KYC, account age), and fingerprint consistency checks can still trigger a block. Distributed networks are therefore best viewed as a foundational privacy layer that must be combined with human-mimicking execution strategies to be fully effective.

Agent-Oriented Web Standards (Forward-Looking)

A growing number of technical communities and organizations are exploring the question: if future web users are not only humans but also automated agents, how should websites interact with them securely and compliantly? This has driven discussions on emerging standards and mechanisms, with the goal of enabling websites to explicitly indicate "I allow trusted agents to access" and to provide a secure channel for completing interactions, rather than defaulting to blocking agents as "bot attacks" as is done today.

"Agent Allowed" tag: Just like the robots.txt file that search engines comply with, web pages may in the future add a tag to their code telling browser agents "this is safe to access." For example, if you use an agent to book a flight, the website would not throw up a series of CAPTCHAs but would directly provide an authenticated interface.

API Gateway for Certified Agents: A website can open a dedicated entrance for verified agents, like a "fast lane." Instead of simulating human clicks and input, agents use a more stable API path to complete orders, payments, or data queries.

W3C Discussion: The World Wide Web Consortium (W3C) is already exploring how to develop standardized channels for "managed automation." This means that in the future, we may have a set of globally accepted rules that allow trusted agents to be identified and accepted by websites while maintaining security and accountability.

While these explorations are still in their early stages, once implemented they could significantly improve the relationship between humans, agents, and websites. Imagine no longer having to desperately mimic human mouse movements to "fool" risk controls, but instead completing tasks openly through an "officially approved" channel.

Crypto-native infrastructure may be the first to take off in this direction. Because on-chain applications inherently rely on open APIs and smart contracts, they are automation-friendly. In contrast, traditional Web2 platforms, particularly those relying on advertising or anti-fraud systems, may continue to play defense. However, as users and businesses gradually embrace the efficiency gains offered by automation, these standardization efforts are likely to become a key catalyst in driving the entire internet towards an agent-first architecture.

Conclusion

Browser agents are evolving from simple conversational tools into autonomous systems capable of completing complex online workflows. This shift reflects a broader trend: embedding automation directly into the core interfaces through which users interact with the internet. While the potential for productivity gains is enormous, the challenges are equally significant, including overcoming entrenched anti-bot mechanisms and ensuring security, trust, and responsible use.

In the short term, improved agent reasoning, faster speeds, tighter integration with existing services, and advances in distributed networks are likely to improve reliability gradually. In the long term, we may see the gradual adoption of "agent-friendly" standards in scenarios where automation benefits both service providers and users. This transition will be uneven, however: adoption will be faster in automation-friendly environments like DeFi and slower on Web2 platforms that rely heavily on user interaction and control.

In the future, tech companies will increasingly compete on how well their agents navigate real-world constraints, how securely they can integrate into critical workflows, and how reliably they can deliver results in diverse online environments. Whether this ultimately reshapes the "browser wars" will depend less on pure technological prowess than on building trust, aligning incentives, and demonstrating tangible value in everyday use.

JinseFinance
