Author: Scarlett Zhang; Source: X, @xiaohangzhang7

I feel more and more strongly that the crypto community is desperately trying to be seen by the AI community.

Over the past six months, the entire crypto community has been trying very hard to get close to AI: talking about AI, pivoting to AI, holding AI events, building AI demos, rewriting narratives around AI. Every project wants to find a way to prove that it has some connection with AI.

It feels a lot like a child desperately trying to squeeze in at the adults' table.

But what about the other side?

Many people who actually work in AI have a very nuanced attitude towards crypto. They don't openly criticize it or reject it outright; instead they keep a dignified distance:

"We're not opposed to blockchain, but we don't want to be too deeply involved with crypto for now."

"Technically, it's very interesting, but our clients and investors will mind."

In other words: "You're a decent person, but I don't want to be involved in your circle."

There is indeed a subtle hierarchy of disdain between these two circles, and it's not without reason. Many AI builders believe that AI is a genuine productivity revolution: technological progress that changes the way we work, the form of products, and the way information flows.

And crypto? In their eyes, it's more like an overly financialized, narrative-driven industry that's always looking for the next story to prove its importance, and incidentally, a convenient way to issue tokens and fleece investors.

So when the crypto community suddenly started talking about AI on a large scale, the first reaction of many people in the AI community was: are you seriously building products, or are you just jumping on the bandwagon again?

Frankly, I completely understand this reaction. Over the past few years, crypto has indeed become too adept at packaging the "next wave."

DeFi, NFT, GameFi, SocialFi, DePIN, Inscriptions, and now AI x Crypto. In each wave, someone sews the latest buzzword onto themselves and tells you the future is here. Over time, the outside world has developed a hard-to-reverse impression of crypto: you're always talking about the future, but people are always left wondering whether you're actually creating value or just creating an atmosphere.

This is why many people in the AI community today naturally feel they are in a superior position. They believe that AI is solving real problems, while crypto is still searching for new legitimacy. This bias is very real. This hierarchy of contempt certainly exists.

But the more I think about it lately, the more I find that the interesting question isn't why crypto is so eager to get closer to AI, but another, more counterintuitive one: in the end, will AI be the side that truly needs the other? More precisely: it's not crypto that needs AI.

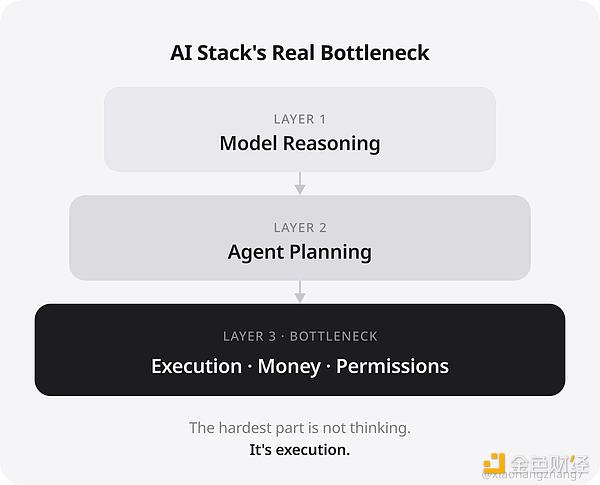

It's AI agents that need crypto.

This isn't a question of "AI being smarter," but rather "Can AI manage money?"

I'm increasingly convinced of this because many agent demos ultimately get stuck at the same point. Lately, everyone has probably seen quite a few demos: agents that can write code, call tools, automatically browse web pages, execute multi-step tasks, and even handle transactions, payments, and automated workflows. At first glance, they seem very cool. But after seeing so many, I'm increasingly concerned about one question: does the agent merely "know how," or can it actually "do"? Because the difference between "knowing how" and "doing" isn't just a matter of minor product details.

The difference lies in **authority, funds, responsibility, and boundaries.**

Having an agent compile a report for you and having an agent complete a real transaction are completely different matters.

If the former goes wrong, at most you'll think the agent is a bit stupid.

If the latter goes wrong, your money is gone.

That is why I increasingly feel that AI demos tend to create one illusion: **everything seems almost there.** But the part that isn't there yet is often the hardest layer: the **execution layer**.

If an AI agent truly starts doing things for you, it will soon need to buy APIs, rent computing power, call paid services, execute transactions, manage budgets, transfer assets, and complete payments between different systems.

In other words, it doesn't just need to "understand your intentions." It needs to start participating in economic activities. And once it enters this layer, the problem changes.

Traditional finance can embrace automation, but it wasn't designed for an "agent world"

Many readers might be thinking, "Traditional finance can do these things too." I've certainly considered that, and frankly, traditional finance is indeed more mature than crypto in many dimensions.

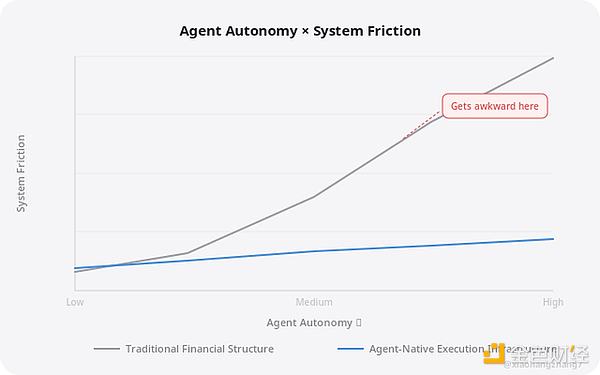

In risk control, auditing, access management, chains of responsibility, and traceability, traditional finance is stronger today. So the real point of this article isn't that crypto is better than traditional finance, nor that AI agents are completely ineffective without crypto. For an internal enterprise agent or a platform agent, many things can certainly still be done using bank APIs, enterprise payment systems, virtual cards, approval workflows, sub-account systems, platform credits, and centralized escrow accounts. These all work, and are likely to remain mainstream in the short term.

The problem, however, is that these systems are essentially built on the same premise: **The agent is not a native executor. It is merely an automated appendage of a user, company, or platform.** This works fine in many scenarios.

But as agents become more autonomous, more cross-platform, more cross-border, and more reliant on native access to resources and funds across different systems, traditional systems will become increasingly awkward. So the real issue isn't whether traditional finance can handle it, but whether it is the most natural, scalable, and agent-native structure. These are two completely different questions.

The key to AI agents isn't whether they are "legal entities," but that they increasingly resemble "execution units"

At this point, you might think, "But agents aren't a third kind of entity either. They're not people, nor are they companies; they're just software agents." That's true. Strictly speaking, an AI agent will not necessarily become an independent legal entity. Most of the time, it's more likely to act as a proxy for users, businesses, or platforms. Even so, it will increasingly resemble an execution unit that can be assigned **budgets, permissions, tasks, and boundaries**. This is the key point.

The reason this problem hasn't fully surfaced today is that there aren't enough agents yet; many things are still at the stage of "humans watching the agent perform its tasks."

But suppose agents really do operate at scale in the future:

helping you with transactions,

helping you with procurement,

helping you with operations,

helping you manage budgets,

helping you automatically allocate resources between systems.

Then you'll run into a very awkward problem: how exactly should these things be granted permissions? Whose account does an agent belong to? Whose payment authorization is it linked to? How much money can it spend? Who is responsible if it exceeds its authority? How is the underlying settlement handled when it calls services globally?

Traditional finance can handle this.

But it will become increasingly awkward, because it was never designed on the premise that "software execution units will participate in economic activities on a large scale."
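To make those questions concrete (whose account, how much can be spent, who is responsible), here is a minimal Python sketch of a spending mandate that a principal grants to one agent. It is only an illustration under stated assumptions, not a real wallet or payment API: the `Mandate` class, its fields, and the service names are all hypothetical and invented for this example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"


@dataclass
class Mandate:
    """Hypothetical spending mandate granted by a principal to one agent."""
    principal: str               # who is accountable for the agent's spending
    agent_id: str                # which agent acts under this mandate
    budget_cents: int            # total the agent may spend
    allowed_services: frozenset  # services the agent is permitted to pay
    spent_cents: int = 0
    log: list = field(default_factory=list)  # attribution trail of every request

    def authorize(self, service: str, amount_cents: int) -> Decision:
        # Deny invalid amounts and services outside the allow list.
        if amount_cents <= 0 or service not in self.allowed_services:
            decision = Decision.DENY
        # Deny anything that would push total spending past the budget.
        elif self.spent_cents + amount_cents > self.budget_cents:
            decision = Decision.DENY
        else:
            self.spent_cents += amount_cents
            decision = Decision.ALLOW
        # Record every request, allowed or not, against the principal.
        self.log.append(
            (self.principal, self.agent_id, service, amount_cents, decision.value)
        )
        return decision


# Example usage with made-up names and amounts.
mandate = Mandate(
    principal="alice",
    agent_id="research-agent-1",
    budget_cents=5_000,
    allowed_services=frozenset({"api.example", "gpu.example"}),
)
print(mandate.authorize("api.example", 1_200))   # within budget and allow list
print(mandate.authorize("gpu.example", 4_000))   # would exceed the budget
print(mandate.authorize("other.example", 100))   # service not on the allow list
```

The point of the sketch is only that budget, permission, and attribution live in one object that is checked before any money moves. Where such a check would actually be enforced (a bank sub-account, a platform, or an on-chain wallet) is exactly the open question.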

Once the protagonist is changed to an Agent, crypto's previously abstract concepts begin to become concrete

In the past, many people who read about crypto felt that it was always talking in very vague terms:

programmable funds

permissionless

global settlement

trustless execution

Many times it does sound like a bunch of nonsense.

But if you change the protagonist to an AI agent, these concepts suddenly become less abstract.

Because what the agent really needs might just be these things:

It needs a natively callable form of funds.

It needs an execution identity that doesn't have to first become a "company account".

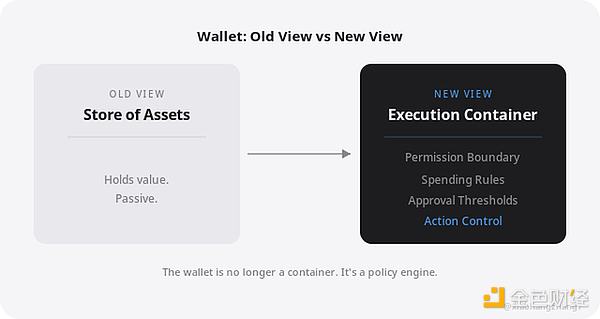

It needs budgets and permissions that can be programmatically constrained.

It needs low-friction settlement globally.

It needs a native connection between invocation behavior and asset behavior.

At this point, you will see a wallet completely differently. A wallet is not just a "place to store coins." It is more like an execution container with permission boundaries.

It holds more than just assets.

It can also set rules: What is allowed? How much may be spent? Which actions can be executed automatically? What thresholds require manual confirmation? Which scenarios are read-only, and which allow writes? Which policies take effect on-chain, and which actions must be stopped?

From this perspective, the relationship between AI and the wallet is very interesting: the AI is responsible for understanding, the wallet is responsible for constraints, and the agent is responsible for actions. This is what a complete system looks like.

The real irony: AI raises the question of trust, and trust is precisely what crypto lacks most

If I were to take an opponent's side, I would say: you just said that AI's real problem is trust, so why are you pointing to crypto as the answer? This criticism is reasonable.

After all, in the eyes of most ordinary people, crypto is not a "naturally trustworthy" system. Private key management is complex, on-chain transactions are irreversible, phishing and signature theft are rampant, contracts are risky, liability boundaries are often blurred, and there may be no one to cover your losses after something goes wrong.

So what I really want to say is not that crypto has solved the problem of trust. On the contrary, my judgment is that AI will force crypto to answer the "trust" question directly. In the past, crypto could stay at the level of "it can be transferred, it can be used, and it runs."

But if it truly wants to become the execution layer for AI agents, it must learn the hardest lessons: permission models, security boundaries, attribution of responsibility, risk control systems, recoverability, and human-in-the-loop confirmation mechanisms. In other words, AI won't automatically be the making of crypto.

Instead, AI will force into the open everything about crypto that has been most ambiguous, lazy, and narrative-driven. So I'm not saying crypto is already the answer.

I'm saying: **If agent-native execution infrastructure truly exists in the future, it will most likely resemble crypto more than today's traditional account systems.**

So the question may never have been "How can crypto leverage AI to revive its popularity?"

This is also the view that has bothered me most lately. Many people automatically interpret AI x Crypto as:

Crypto is just trying to piggyback on AI again.

Crypto wants to use AI to tell a new story.

Crypto needs AI to extend its life.

I don't deny that there are indeed many projects like this in the market, quite a few. However, stopping at this level would miss a more fundamental layer: once AI truly moves towards execution, it will inevitably run into issues of funds, authority, responsibility, identity, and settlement. These issues cannot be solved simply by making the model "more powerful."

They are essentially another layer of infrastructure problems. In other words, the further AI develops, the closer it gets to the problem domain that crypto has long worked on. This is not because crypto is more advanced than AI.

Rather, it's because when AI reaches into the real world, it inevitably runs into the questions of how to manage the money, how to grant permissions, and how to ensure accountability. And this is precisely what prompts cannot solve.

What AI truly lacks may not be greater intelligence, but greater trustworthiness

I increasingly feel that the most difficult part of AI x Crypto has never been intelligence, but trust. You could create a stunning demo: a single sentence to complete a swap. A single sentence to complete a bridge. A single sentence to automatically allocate assets. Automatic on-chain execution. It certainly looks futuristic.

But would users really dare to use it? Even if they dared to try it once, would they dare to use it long-term? Even if they dared to use it long-term, how would liability be determined if problems arose? Would the product team dare to make such a commitment? Would the platform dare to provide a safety net? Would the developers dare to grant higher privileges?

So ultimately you'll find that what truly keeps AI agents out of the world of finance and assets isn't whether they're smart enough, but whether they're sufficiently constrained. Who can define their boundaries? Who can verify their actions? Who can stop them before risks materialize? Who can clarify responsibility after they do?

So what will truly be scarce in the future may not be the strongest model, nor the most eloquent agent, but the most trustworthy execution layer. This is why I increasingly believe this statement: AI agents need crypto, not the other way around.

To be more precise:

Not all AI needs crypto.

Not all agent scenarios need crypto.

Nor does crypto already provide a mature solution.

But I increasingly believe that as AI agents move towards real execution, real assets, real permissions, and real responsibility, they will increasingly need an infrastructure more like crypto. That infrastructure doesn't need more concepts. What it needs is programmable funds, programmable permissions, programmable identity, native global settlement, and verifiable execution boundaries. These are precisely the few areas where crypto is not just empty talk.

So in a sense, I don't think the AI community's current disdain for crypto is entirely unreasonable. However, I increasingly suspect that it arises mostly because the two sides are on different timelines. Today, the AI community is most concerned with models, products, distribution, and efficiency.

The crypto community, however, has been preoccupied with issues of assets, permissions, custody, settlement, and liability for much longer. Everyone seems to be discussing the future, but they're not actually discussing the same layer of the future.

The AI community might find crypto too narrative-driven, too financial, and too speculative.

The crypto community might feel that the AI community hasn't truly encountered the most difficult implementation problems yet.

In a sense, neither side is entirely wrong. However, I increasingly feel that when AI agents truly begin to participate in economic activities on a large scale, this seemingly stable hierarchy of disdain may slowly reverse. At that point, the question might no longer be: why does crypto always want to gravitate towards AI? Instead, the question becomes: without a more suitable execution infrastructure for agents, how can AI agents truly enter the real world?

JinseFinance
