This study explores which AI fields matter most to developers, and which may be the next breakout opportunities in Web3 and AI.

Before sharing our new research insights, we are happy to announce that we participated in RedPill's first round of financing, which totaled $5 million. We are excited and look forward to growing together with RedPill!

TL;DR

As the combination of Web3 and AI has become a hot topic in the cryptocurrency world, AI infrastructure for crypto has flourished. However, few applications actually use AI or are built for AI, and AI infrastructure projects are becoming increasingly homogeneous. Our recent participation in RedPill's first round of financing prompted us to look at this space more deeply.

The main tools for building AI Dapps include decentralized OpenAI access, GPU networks, inference networks, and agent networks.

GPU networks are more popular now than they were in the Bitcoin mining era because the AI market is larger and growing rapidly and steadily; AI supports millions of applications every day; AI requires a variety of GPU models and server locations; the technology is more mature than in the past; and the customer base is wider.

Inference networks and agent networks have similar infrastructure but different focuses. Inference networks are mainly for experienced developers who deploy their own models, and GPUs are not necessarily required to run non-LLM models. Agent networks focus more on LLMs: developers do not need to bring their own models and instead concentrate on prompt engineering and on connecting different agents. Agent networks always require high-performance GPUs.

AI infrastructure projects make big promises and are still rolling out new features.

Most crypto-native projects are still in the testnet stage, with poor stability, complex configuration, and limited functionality, and they still need time to prove their security and privacy.

Assuming AI dApps become a major trend, many areas remain undeveloped, such as monitoring, RAG-related infrastructure, Web3-native models, decentralized agents with built-in crypto-native APIs and data, and evaluation networks.

Vertical integration is a significant trend. Infrastructure projects try to provide one-stop services to simplify the work of AI Dapp developers.

The future will be hybrid. Part of the inference will run on the front end and part will be computed on-chain, balancing cost against verifiability.

Source: IOSG

Introduction

The combination of Web3 and AI is one of the most watched topics in the crypto space today. Talented developers are building AI infrastructure for the crypto world, working to bring intelligence to smart contracts. Building AI dApps is an extremely complex task: developers need to deal with data, models, computing power, operations, deployment, and blockchain integration. In response to these needs, Web3 founders have developed many preliminary solutions, such as GPU networks, community data annotation, community-trained models, verifiable AI inference and training, and agent stores.

Despite this thriving infrastructure, few applications actually use AI or are built for AI. Developers looking for AI dApp development tutorials find that few cover crypto-native AI infrastructure; most only show how to call OpenAI APIs from the front end.

Source: IOSG Ventures

Current applications fail to make full use of the decentralized and verifiable features of blockchain, but this will soon change. Most crypto-focused AI infrastructure projects have now launched testnets and plan to go live within the next 6 months.

This study will provide a detailed introduction to the main tools available in the AI infrastructure in the crypto space. Let's get ready for the GPT-3.5 moment in the crypto world!

1. RedPill: Providing decentralized authorization for OpenAI

RedPill, the project we invested in as mentioned above, is a good starting point.

OpenAI offers several world-class models, such as GPT-4-vision, GPT-4-turbo, and GPT-4o, which are the best choices for building advanced AI dApps.

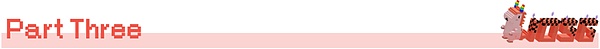

Developers can call the OpenAI API through an oracle or front-end interface to integrate it into their dApp.

RedPill aggregates OpenAI API access from different developers under one interface, providing fast, affordable, and verifiable AI services to users around the world and thereby democratizing access to top AI models. RedPill's routing algorithm directs each developer request to a single contributor. API requests are executed through its distribution network, bypassing any possible restrictions from OpenAI and solving some common problems faced by crypto developers, such as:

Limited TPM (Tokens Per Minute): New accounts have low token limits, which cannot meet the needs of popular, AI-dependent dApps.

Access restrictions: Some models restrict access to new accounts or certain countries.

By using the same request code and changing only the hostname, developers can access OpenAI models at low cost, with high scalability, and without these limits.
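The hostname swap described above can be sketched as follows. This is a minimal illustration, not RedPill's documented API: `ROUTER_HOST` is a hypothetical placeholder, the API key is a dummy value, and the sketch assumes the router accepts the same OpenAI-style `/v1/chat/completions` path and payload. The requests are only built, never sent.

```python
import json
from urllib.request import Request

OPENAI_HOST = "https://api.openai.com"
# Hypothetical router host for illustration only (not a real RedPill endpoint).
ROUTER_HOST = "https://router.example.invalid"


def build_chat_request(host: str, api_key: str, prompt: str) -> Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": "gpt-4o",
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url=f"{host}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Same code path, same payload; only the host argument differs.
direct = build_chat_request(OPENAI_HOST, "sk-placeholder", "Summarize EIP-1559.")
routed = build_chat_request(ROUTER_HOST, "sk-placeholder", "Summarize EIP-1559.")
```

Keeping the host as a single parameter means a dApp could switch between the direct and routed endpoints through configuration alone.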

2. GPU Network

In addition to using OpenAI's API, many developers choose to host models themselves. They can rely on decentralized GPU networks, such as io.net, Aethir, Akash, and other popular networks, to build GPU clusters and deploy and run a variety of powerful in-house or open-source models.

Such decentralized GPU networks can provide flexible configurations, more server location options, and lower costs with the computing power of individuals or small data centers, allowing developers to easily conduct AI-related experiments within a limited budget. However, due to the decentralized nature, such GPU networks still have certain limitations in functionality, usability, and data privacy.

In the past few months, demand for GPUs has surged, exceeding that of the earlier Bitcoin mining boom. The reasons for this include:

A broader customer base: GPU networks now serve AI developers, who are not only numerous but also more loyal and are not affected by cryptocurrency price fluctuations.

Compared to mining-specific equipment, decentralized GPUs come in more models and specifications and can better meet the industry's requirements. In particular, large models require more VRAM, while small tasks can run on cheaper, better-suited GPUs. Decentralized GPUs can also be located close to end users, which reduces latency.

The technology is becoming more mature: GPU networks rely on settlement on high-speed blockchains such as Solana, Docker virtualization, and Ray compute clusters.

In terms of return on investment, the AI market is expanding and offers many opportunities to develop new applications and models. The expected return rate of the H100 is 60-70%, while Bitcoin mining is more complex, winner-takes-all, and limited in output.

Bitcoin mining companies such as Iris Energy, Core Scientific, and Bitdeer have also begun to support GPU networks, provide AI services, and actively purchase GPUs designed for AI, such as H100.
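The point above about matching VRAM to workload can be made concrete with a rough sizing sketch. Everything here is an assumption for illustration: the catalog is a tiny hand-picked list rather than any network's real inventory, the estimate covers model weights only, and the 1.2 overhead factor for activations and caches is a guess, not a measured value.

```python
from typing import Optional

# Illustrative catalog: (name, VRAM in GB). Real network offers will differ.
GPU_CATALOG = [("RTX 4090", 24), ("A100 80GB", 80), ("H100 80GB", 80)]


def estimate_vram_gb(params_billion: float, bytes_per_param: float,
                     overhead: float = 1.2) -> float:
    """Rough weights-only estimate: parameters x bytes each, padded by an assumed overhead."""
    return params_billion * bytes_per_param * overhead


def smallest_fitting_gpu(required_gb: float) -> Optional[str]:
    """Return the smallest single GPU in the catalog that fits, or None if none does."""
    fitting = [(vram, name) for name, vram in GPU_CATALOG if vram >= required_gb]
    return min(fitting)[1] if fitting else None


small = smallest_fitting_gpu(estimate_vram_gb(7, 2))        # 7B parameters at 16-bit
large = smallest_fitting_gpu(estimate_vram_gb(70, 2))       # 70B parameters at 16-bit
quantized = smallest_fitting_gpu(estimate_vram_gb(70, 0.5)) # 70B parameters at 4-bit
```

Under these assumptions the small model fits a consumer card, the large one needs more than any single GPU listed, and quantization brings it back within reach of one 80 GB card.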

Recommendation: For Web2 developers who are not very concerned about SLAs, io.net provides a simple, easy-to-use experience and is a cost-effective choice.

3. Inference Network

This is the core of crypto-native AI infrastructure, and it is expected to support billions of AI inference operations in the future. Many AI Layer 1 and Layer 2 networks let developers call AI inference natively on-chain. Market leaders include Ritual, Valence, and Fetch.ai.

These networks differ in the following aspects:

Performance (latency, compute time)

Supported models

Verifiability

Price (on-chain consumption cost, inference cost)

Development experience

3.1 Goals

Ideally, developers can easily access custom AI inference services from anywhere, with any form of proof, with almost no friction in the integration process.

The inference network provides all the basic support developers need, including on-demand proof generation and verification, inference computation, relay and verification of inference data, Web2 and Web3 interfaces, one-click model deployment, system monitoring, cross-chain operations, synchronous integration, and scheduled execution.

Source: IOSG Ventures

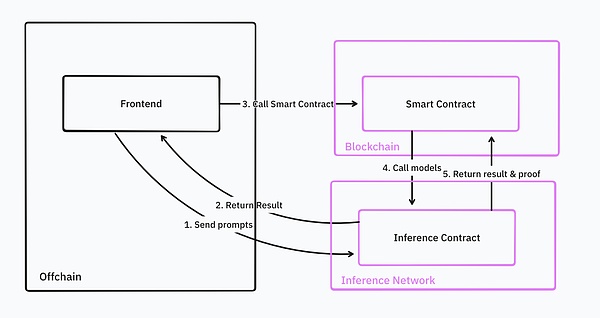

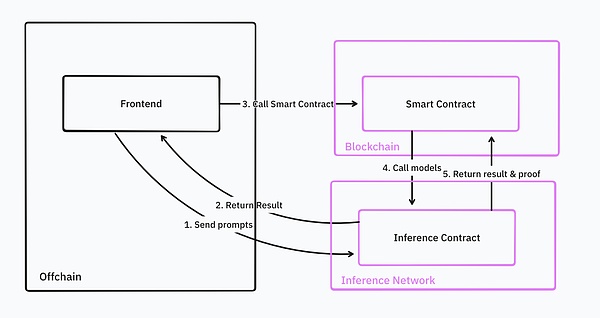

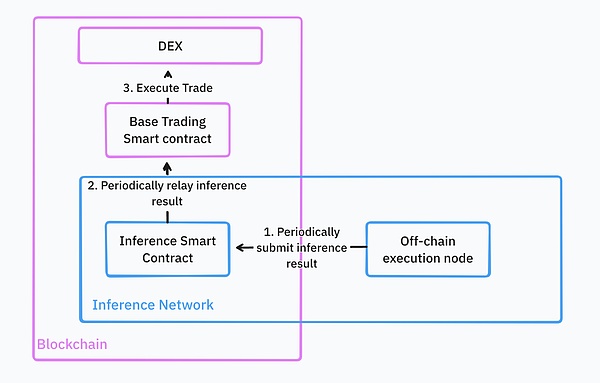

With these features, developers can seamlessly integrate inference services into their existing smart contracts. For example, DeFi trading bots can use machine learning models to find buy and sell opportunities for specific trading pairs and execute the corresponding trading strategies on the underlying trading platform.

In a fully ideal state, all infrastructure is cloud-hosted. Developers only need to upload their trading strategy models in a common format such as PyTorch, and the inference network will store the model and serve it for Web2 and Web3 queries.

Once all model deployment steps are completed, developers can call model inference directly through the Web3 API or smart contracts. The inference network will continue to execute these trading strategies and feed the results back to the underlying smart contract. If the developer manages a large amount of community funds, they will also need to provide verification of the inference results. Once the inference results are received, the smart contract will trade according to these results.

Source: IOSG Ventures
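The ideal flow described above (deploy a model, call inference, verify the result, then trade) can be sketched with in-memory stand-ins. All class names here are hypothetical, the "proof" is a plain string rather than a real cryptographic proof, and the momentum rule is a toy strategy used only to produce a signal.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class InferenceResult:
    signal: str  # "buy", "sell", or "hold"
    proof: str   # placeholder standing in for a real validity proof


class ToyInferenceNetwork:
    """Stores deployed models and serves inference with a dummy proof attached."""

    def __init__(self) -> None:
        self._models: Dict[str, Callable[[List[float]], str]] = {}

    def deploy(self, model_id: str, model: Callable[[List[float]], str]) -> None:
        self._models[model_id] = model

    def infer(self, model_id: str, prices: List[float]) -> InferenceResult:
        signal = self._models[model_id](prices)
        return InferenceResult(signal, f"proof:{model_id}:{signal}")


class ToyTradingContract:
    """Checks the dummy proof before acting on an inference result."""

    def __init__(self, model_id: str) -> None:
        self.model_id = model_id
        self.trades: List[str] = []

    def on_inference(self, result: InferenceResult) -> None:
        if result.proof != f"proof:{self.model_id}:{result.signal}":
            raise ValueError("inference result failed verification")
        if result.signal != "hold":
            self.trades.append(result.signal)


def momentum_model(prices: List[float]) -> str:
    """Toy strategy: compare the latest price with the window average."""
    mean = sum(prices) / len(prices)
    if prices[-1] > mean:
        return "buy"
    if prices[-1] < mean:
        return "sell"
    return "hold"


network = ToyInferenceNetwork()
network.deploy("momentum-v1", momentum_model)
contract = ToyTradingContract("momentum-v1")
contract.on_inference(network.infer("momentum-v1", [100.0, 101.0, 105.0]))
```

The verification step sits inside the contract so that an unverified result can never reach the trading logic, which is the property a fund manager would need.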

3.1.1 Asynchronous and synchronous

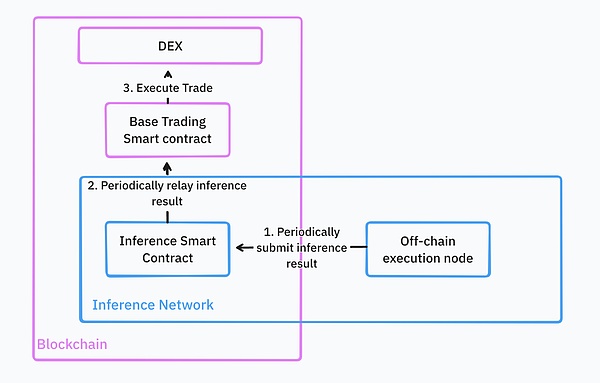

In theory, asynchronous inference can deliver better performance; however, it can make for an inconvenient development experience.

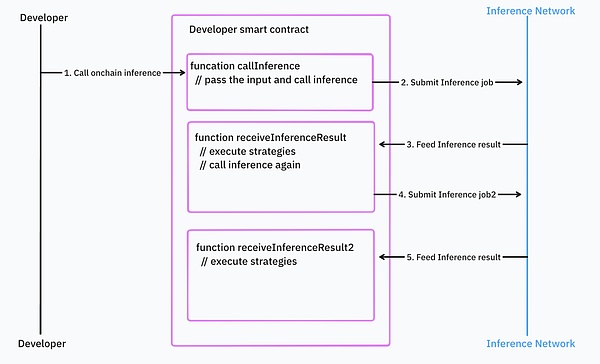

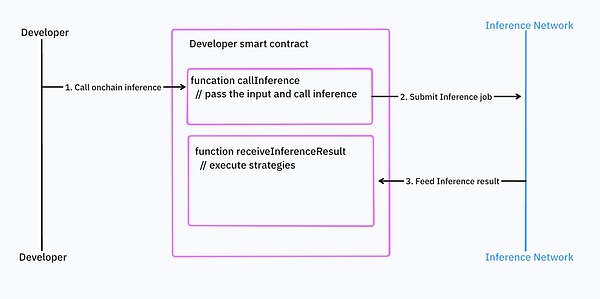

With the asynchronous approach, developers first submit the task to the inference network's smart contract. When the inference task is completed, the inference network's smart contract returns the result. In this programming model, the logic is split into two parts: the inference call and the handling of the inference result.

Source: IOSG Ventures
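The two-part split of the asynchronous model can be sketched in plain Python. This is a simulation under stated assumptions, not any network's actual contract interface: `ToyAsyncInferenceContract` and `ToyApp` are hypothetical names, and `fulfill` stands in for the moment an off-chain node delivers the result.

```python
from typing import Callable, Dict, List, Tuple


class ToyAsyncInferenceContract:
    """Stand-in for the inference network's contract: queue tasks, call back later."""

    def __init__(self) -> None:
        self._next_id = 0
        self._pending: Dict[int, Tuple[float, Callable[[int, float], None]]] = {}

    def request(self, model_input: float, callback: Callable[[int, float], None]) -> int:
        task_id = self._next_id
        self._next_id += 1
        self._pending[task_id] = (model_input, callback)
        return task_id

    def fulfill(self, task_id: int, model: Callable[[float], float]) -> None:
        """Simulates a node finishing the task and delivering the result."""
        model_input, callback = self._pending.pop(task_id)
        callback(task_id, model(model_input))


class ToyApp:
    """Application logic, split into an inference call and a result handler."""

    def __init__(self, network: ToyAsyncInferenceContract) -> None:
        self.network = network
        self.context: Dict[int, float] = {}  # state carried between the two halves
        self.decisions: List[Tuple[float, str]] = []

    def start(self, price: float) -> int:
        # Part 1: submit the task and remember what it was for.
        task_id = self.network.request(price, self.on_result)
        self.context[task_id] = price
        return task_id

    def on_result(self, task_id: int, score: float) -> None:
        # Part 2: runs later, so it must recover the saved context first.
        price = self.context.pop(task_id)
        self.decisions.append((price, "buy" if score > 0.5 else "hold"))


network = ToyAsyncInferenceContract()
app = ToyApp(network)
task = app.start(100.0)
```

Nothing is decided until `network.fulfill(task, model)` runs. The `context` dictionary is the extra bookkeeping that the asynchronous style forces on the developer.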

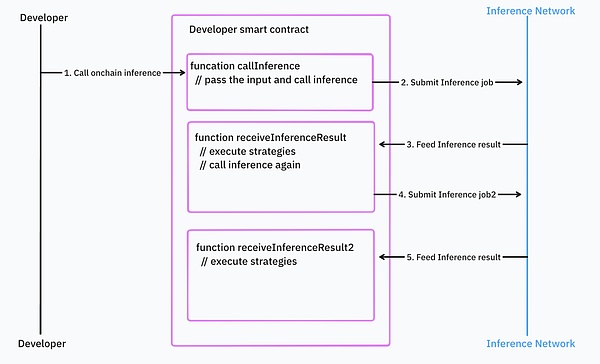

If developers have nested inference calls and a lot of control logic, the situation will be even worse.

Source: IOSG Ventures

The asynchronous programming model makes it difficult to integrate with existing smart contracts. Developers have to write a lot of additional code, handle errors, and manage dependencies.

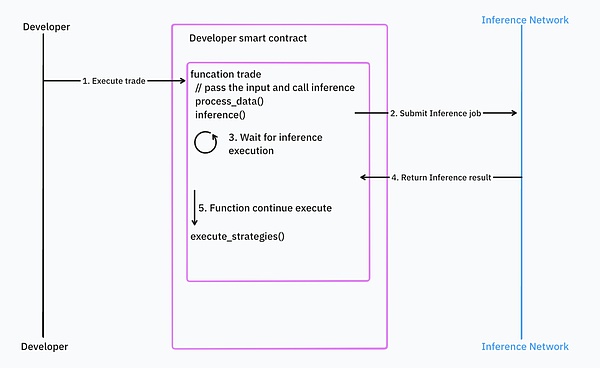

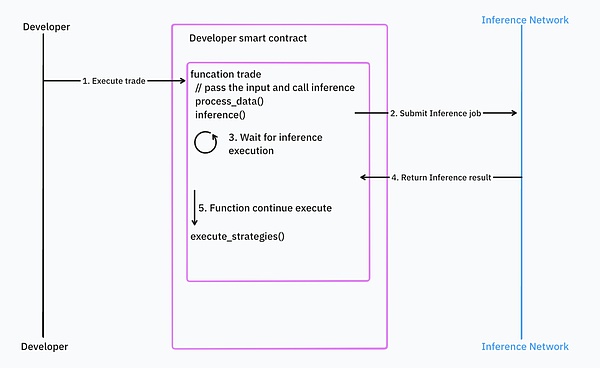

By comparison, synchronous programming is more intuitive for developers, but it introduces problems with response time and blockchain design. For example, if the input is fast-changing data such as block time or price, the data is no longer fresh by the time inference completes, which may cause the smart contract execution to be rolled back in certain circumstances. Imagine executing a trade at an outdated price.

Source: IOSG Ventures

Most AI infrastructure uses asynchronous processing, but Valence is trying to solve these problems.
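The stale-input problem described above is often handled with a freshness guard. The sketch below is one possible approach and is not taken from any of the networks mentioned: the function name and the 0.5% `max_drift` tolerance are illustrative assumptions, and the exception stands in for a contract revert.

```python
class StaleInputError(Exception):
    """Raised where a real smart contract would revert the transaction."""


def execute_trade(quoted_price: float, current_price: float,
                  max_drift: float = 0.005) -> dict:
    """Trade only if the price used for inference is still close to the live price."""
    drift = abs(current_price - quoted_price) / quoted_price
    if drift > max_drift:
        raise StaleInputError(
            f"price moved {drift:.2%} since inference started; aborting"
        )
    return {"side": "buy", "price": current_price}


# The price barely moved while inference ran, so the trade goes through.
fill = execute_trade(quoted_price=100.0, current_price=100.2)
```

If the price had moved to 102.0 instead, the same call would raise `StaleInputError`, which mirrors the rollback case in the text.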

3.2 Reality

In reality, many new inference networks, such as Ritual, are still in the testing phase. According to their public documentation, these networks currently have limited functionality (features such as verification and proofs are not yet live). They do not yet provide cloud infrastructure to support on-chain AI computing; instead, they provide a framework for self-hosting AI computation and passing the results to the chain.

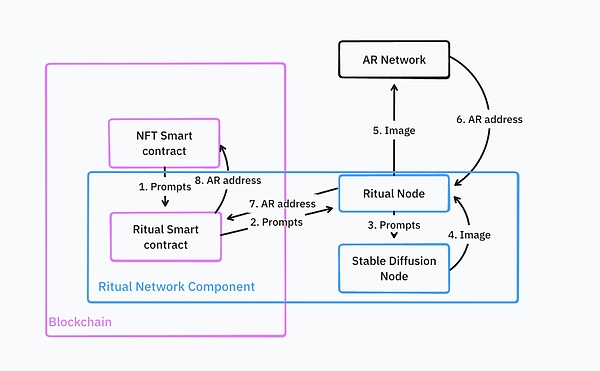

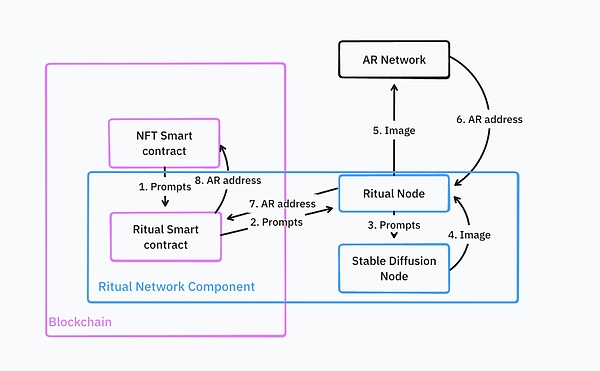

This is an architecture for running AIGC NFTs. The diffusion model generates the NFT images and uploads them to Arweave. The inference network then uses the Arweave address to mint the NFT on-chain.

Source: IOSG Ventures

This process is very complex, and developers need to deploy and maintain most of the infrastructure themselves, such as Ritual nodes, Stable Diffusion nodes, and NFT smart contracts with customized service logic.
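The generate, upload, and mint steps of the architecture above can be sketched end to end with in-memory stand-ins. None of this is Ritual's or Arweave's real API: the classes are hypothetical, the diffusion model is replaced by a stub, and a SHA-256 digest is used as a made-up transaction ID with an assumed `ar://` style URI.

```python
import hashlib
from typing import Dict, Tuple


class ToyPermanentStore:
    """In-memory stand-in for permanent storage such as Arweave."""

    def __init__(self) -> None:
        self.blobs: Dict[str, bytes] = {}

    def upload(self, data: bytes) -> str:
        tx_id = hashlib.sha256(data).hexdigest()  # made-up ID scheme for this sketch
        self.blobs[tx_id] = data
        return tx_id


class ToyNFTContract:
    """In-memory stand-in for an NFT contract with custom mint logic."""

    def __init__(self) -> None:
        self.tokens: Dict[int, Tuple[str, str]] = {}

    def mint(self, owner: str, uri: str) -> int:
        token_id = len(self.tokens) + 1
        self.tokens[token_id] = (owner, uri)
        return token_id


def fake_diffusion(prompt: str) -> bytes:
    """Stub for the self-hosted diffusion node; returns placeholder bytes."""
    return f"image-for:{prompt}".encode("utf-8")


def generate_and_mint(prompt: str, owner: str,
                      store: ToyPermanentStore, nft: ToyNFTContract) -> int:
    image = fake_diffusion(prompt)             # 1. generate off-chain
    tx_id = store.upload(image)                # 2. upload to storage
    return nft.mint(owner, f"ar://{tx_id}")    # 3. mint with the storage address


store = ToyPermanentStore()
nft = ToyNFTContract()
token_id = generate_and_mint("a cat in space", "0xOwnerPlaceholder", store, nft)
```

Even in this toy form, three separate components have to be wired together, which gives a sense of the operational burden on developers today.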

Recommendation: Integrating and deploying custom models on today's inference networks is quite complex, and most networks do not support verification at this stage. Running AI on the front end is a relatively simple option for developers. If you really need verification, ZKML provider Giza is a good choice.

4. Agent Network

An agent network allows users to easily customize agents. Such a network consists of entities or smart contracts that can autonomously perform tasks and interact with each other and the blockchain network without direct human intervention. It is built mainly around LLMs. For example, it can provide a GPT chatbot that has a deep understanding of Ethereum. The tools currently available to such chatbots are relatively limited, and developers cannot yet build complex applications on top of them.

Source: IOSG Ventures

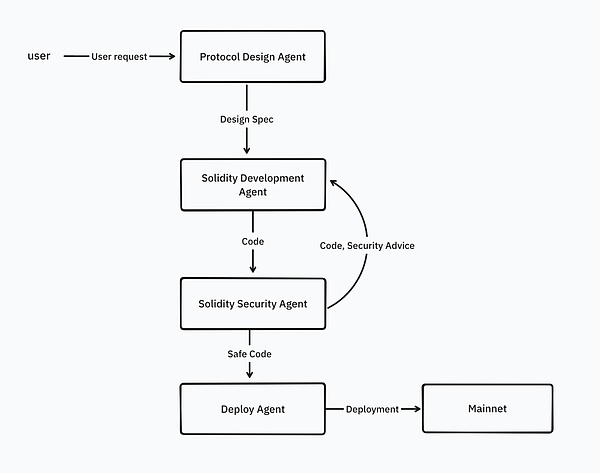

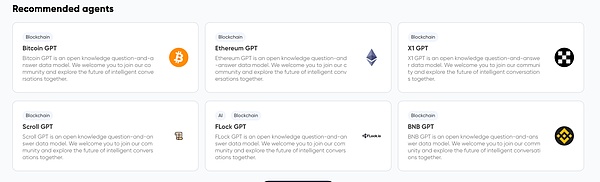

But in the future, the agent network will provide more tools for agents to use, not just knowledge, but also the ability to call external APIs and perform specific tasks. Developers will be able to connect multiple agents to build workflows. For example, writing a Solidity smart contract involves multiple specialized agents, including a protocol design agent, a Solidity development agent, a code security review agent, and a Solidity deployment agent.

Source: IOSG Ventures

We coordinate the cooperation of these agents by using prompts and scenarios.
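The multi-agent workflow described above can be sketched as a simple pipeline. This is an illustration under assumptions rather than any agent network's API: each "agent" is a plain function returning canned text, where a real system would call an LLM with a role-specific prompt.

```python
from typing import Callable, Dict, List

Artifact = Dict[str, str]
Agent = Callable[[Artifact], Artifact]


def protocol_design_agent(artifact: Artifact) -> Artifact:
    return {**artifact, "spec": f"spec for {artifact['goal']}"}


def solidity_dev_agent(artifact: Artifact) -> Artifact:
    return {**artifact, "code": f"// contract implementing {artifact['spec']}"}


def security_review_agent(artifact: Artifact) -> Artifact:
    # Toy check only; a real reviewer agent would analyze the code itself.
    verdict = "passed" if "contract" in artifact["code"] else "failed"
    return {**artifact, "review": verdict}


def deployment_agent(artifact: Artifact) -> Artifact:
    if artifact["review"] != "passed":
        raise RuntimeError("refusing to deploy code that failed review")
    return {**artifact, "deployed": "true"}


def run_workflow(goal: str, agents: List[Agent]) -> Artifact:
    """Pass a shared artifact through each agent in order."""
    artifact: Artifact = {"goal": goal}
    for agent in agents:
        artifact = agent(artifact)
    return artifact


result = run_workflow(
    "ERC-20 token",
    [protocol_design_agent, solidity_dev_agent, security_review_agent, deployment_agent],
)
```

Because every agent reads and extends the same artifact, reordering or swapping agents only requires changing the list passed to `run_workflow`.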

Some examples of agent networks include Flock.ai, Myshell, and Theoriq.

Recommendation: Most of today's agents have relatively limited capabilities. For specific use cases, Web2 agents serve better and come with mature orchestration tools, such as LangChain and LlamaIndex.

5. Differences between Proxy Network and Inference Network

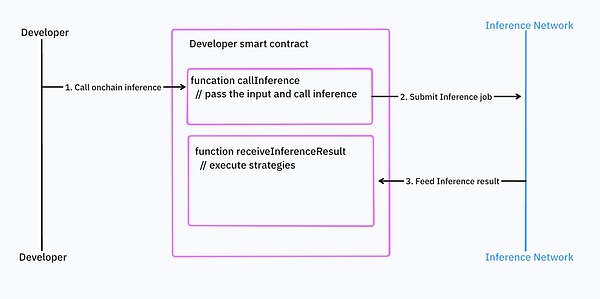

Proxy Network focuses more on LLM and provides tools such as Langchain to integrate multiple agents. Usually, developers do not need to develop machine learning models themselves, and the proxy network has simplified the process of model development and deployment. They only need to link the necessary agents and tools. In most cases, end users will use these agents directly.

Inference Network is the infrastructure support for Proxy Network. It provides developers with lower-level access rights. Normally, end users do not use Inference Network directly. Developers need to deploy their own models, which is not limited to LLM, and they can use them through off-chain or on-chain access points.

Proxy Network and Inference Network are not completely independent products. We have already started to see some vertically integrated products. They provide both proxy and reasoning capabilities because both functions rely on similar infrastructure.

6. New opportunities

In addition to model inference, training, and agent networks, there are many new areas worth exploring in Web3:

Datasets: How can blockchain data be turned into datasets that machine learning can use? Machine learning developers need more specific, topic-focused data. For example, Giza provides some high-quality DeFi datasets built specifically for machine learning training. Ideally, the data should include not only simple tabular data but also graph data that describes interactions in the blockchain world. This is still lacking today. Some projects, such as Bagel and Sahara, are addressing the problem by rewarding individuals for creating new datasets while promising to protect the privacy of personal data.

Model storage: Some models are large, and how to store, distribute, and version them is key to the performance and cost of on-chain machine learning. Pioneering projects in this area, such as Filecoin, Arweave (AR), and 0g, have made progress.

Model training: Distributed and verifiable model training is a difficult problem. Gensyn, Bittensor, Flock and Allora have made significant progress.

Monitoring: Since model inference occurs both on-chain and off-chain, we need new infrastructure to help Web3 developers track model usage and identify potential problems and deviations in a timely manner. With the right monitoring tools, Web3 machine learning developers can make timely adjustments and continuously optimize model accuracy.

RAG Infrastructure: Distributed RAG requires a completely new infrastructure environment, with high demands on storage, embedding computation, and vector databases, while ensuring data privacy and security. This is very different from the current Web3 AI infrastructure, which mostly relies on third parties, such as Firstbatch and Bagel, to handle RAG.

Models tailored for Web3: Not all models are suitable for Web3 scenarios. In most cases, models need to be retrained for specific applications such as price prediction and recommendations. As AI infrastructure prospers, we expect more web3 native models to serve AI applications in the future. For example, Pond is developing blockchain GNNs for price prediction, recommendation, fraud detection, and anti-money laundering.

Evaluation Networks: It is not easy to evaluate agents without human feedback. As agent creation tools become more popular, countless agents will appear on the market. This requires a system to demonstrate the capabilities of these agents and help users judge which agent performs best in a specific situation. For example, Neuronets is a player in this field.

Consensus Mechanism: For AI tasks, PoS is not necessarily the best choice. Computational complexity, difficulty in verification, and lack of certainty are the main challenges facing PoS. Bittensor has created a new smart consensus mechanism that rewards nodes in the network that contribute to machine learning models and outputs.

7. Future Outlook

We are currently observing a trend towards vertical integration. By building a basic computing layer, a network can support a variety of machine learning tasks, including training, inference, and agent network services. This model is intended to provide a comprehensive one-stop solution for machine learning developers in Web3.

Currently, although on-chain inference is costly and slow, it provides excellent verifiability and seamless integration with back-end systems (such as smart contracts). We think the future will move towards hybrid applications. Part of the inference will be done on the front end or off-chain, while critical, decision-making inference will be done on-chain. This model is already used on mobile devices: small models run quickly on the device itself, and more complex tasks are sent to the cloud to be processed by larger LLMs.
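The hybrid split described above comes down to a routing decision per inference task. The sketch below shows one way such a rule might look; the task fields and the 10,000 USD threshold are illustrative assumptions, not figures from this study.

```python
from dataclasses import dataclass


@dataclass
class InferenceTask:
    name: str
    value_at_risk_usd: float  # funds affected by acting on the result
    needs_proof: bool         # whether a contract must verify the result


def choose_venue(task: InferenceTask, onchain_threshold_usd: float = 10_000.0) -> str:
    """Send critical, high-stakes inference on-chain and everything else off-chain."""
    if task.needs_proof or task.value_at_risk_usd >= onchain_threshold_usd:
        return "on-chain"
    return "off-chain"


tasks = [
    InferenceTask("chat suggestion", 0.0, False),
    InferenceTask("treasury rebalance", 250_000.0, True),
    InferenceTask("small swap hint", 50.0, False),
]
venues = {task.name: choose_venue(task) for task in tasks}
```

Cheap, low-stakes requests stay off-chain, so the cost and latency of on-chain inference are only paid where verifiability matters.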

Catherine
