Original title: Glue and coprocessor architectures

Author: Vitalik, founder of Ethereum; Translator: Deng Tong, Golden Finance

Special thanks to Justin Drake, Georgios Konstantopoulos, Andrej Karpathy, Michael Gao, Tarun Chitra and various Flashbots contributors for their feedback and comments.

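If you analyze any resource-intensive computation going on in the modern world in moderate detail, one feature you will find again and again is that the computation can be divided into two parts: a relatively small amount of complex but not computationally intensive "business logic", and a large amount of intensive but highly structured "expensive work".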

These two forms of computation are best handled in different ways: the former by an architecture that may be less efficient but needs to be very general, the latter by an architecture that may be less general but needs to be very efficient.

What are some examples of this different approach in practice?

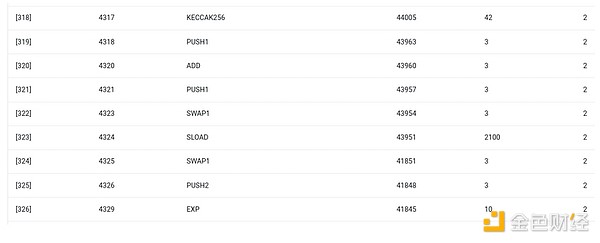

First, let’s look at the environment I’m most familiar with: the Ethereum Virtual Machine (EVM). Here’s a geth debug trace of a recent Ethereum transaction I made, updating the IPFS hash of my blog on ENS. The transaction consumed a total of 46,924 gas, which can be broken down as follows:

Base cost: 21,000

Call data: 1,556

EVM execution: 24,368

SLOAD opcode: 6,400

SSTORE opcode: 10,100

LOG opcode: 2,149

Other: 6,719
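To make the proportions concrete, here is a minimal sketch that only re-does arithmetic on figures already listed above. The variable names are illustrative and nothing here is read from a real trace.

```python
# Figures copied from the breakdown above (gas units).
TOTAL_GAS = 46_924
top_level = {"base cost": 21_000, "calldata": 1_556, "EVM execution": 24_368}

# The three top-level components account for the whole transaction.
assert sum(top_level.values()) == TOTAL_GAS

# Share of the total taken by each top-level component.
shares = {name: gas / TOTAL_GAS for name, gas in top_level.items()}

# Storage access (SLOAD + SSTORE) as a share of EVM execution gas.
storage_gas = 6_400 + 10_100
storage_share_of_execution = storage_gas / top_level["EVM execution"]

for name, share in shares.items():
    print(f"{name:>14}: {share:6.1%}")
print(f"storage opcodes: {storage_share_of_execution:.1%} of EVM execution")
```

On these numbers, the two storage opcodes alone account for about two thirds of the execution gas.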

In the EVM, these two forms of execution are handled differently. High-level business logic is written in a higher-level language, typically Solidity, which compiles to EVM bytecode. Expensive work is still triggered by EVM opcodes (SLOAD, etc.), but 99%+ of the actual computation is done in dedicated modules written directly inside client code (or even libraries).

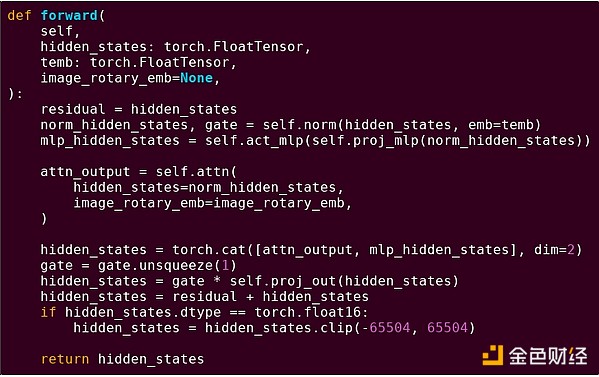

To reinforce our understanding of this pattern, let's explore it in another context: AI code written in Python using torch.

Forward pass of one block of the transformer model

What do we see here? We see a relatively small amount of "business logic" written in Python, which describes the structure of the operations being performed. In a real application, there would be another type of business logic that determines details such as how to obtain the inputs and what to do with the outputs. However, if we drill down into each individual operation (the individual steps inside self.norm, torch.cat, +, *, self.attn, ...), we see vectorized computation: the same operation is computed in parallel over a large number of values. As in the first example, a small portion of the computation goes to business logic, and the majority goes to large structured matrix and vector operations; in fact, most of it is just matrix multiplication.

Just like in the EVM example, these two types of work are handled in two different ways. The high-level business logic code is written in Python, a highly general and flexible language, but also very slow, and we simply accept the inefficiency because it only accounts for a small portion of the total computational cost. Meanwhile, the intensive operations are written in highly optimized code, typically CUDA code that runs on GPUs. We are even increasingly starting to see LLM inference being done on ASICs.
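The split can be sketched with a toy residual block in plain Python. This is an illustration under loud assumptions: it is not the code from the figure, attention is omitted, and `matmul`, `layer_norm`, `block_forward` and the `OPS` counter are hypothetical names standing in for optimized kernels. The point is only to count how much arithmetic sits behind a few lines of glue.

```python
import math

OPS = {"multiply_adds": 0}  # arithmetic performed inside the matmul kernel


def matmul(a, b):
    """Stand-in for an optimized kernel: plain-Python matrix multiplication."""
    rows, inner, cols = len(a), len(b), len(b[0])
    OPS["multiply_adds"] += rows * inner * cols
    return [[sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for i in range(rows)]


def layer_norm(x, eps=1e-5):
    """Normalize each row to zero mean and (approximately) unit variance."""
    out = []
    for row in x:
        mean = sum(row) / len(row)
        var = sum((v - mean) ** 2 for v in row) / len(row)
        out.append([(v - mean) / math.sqrt(var + eps) for v in row])
    return out


def add(x, y):
    """Elementwise sum of two equally shaped matrices."""
    return [[p + q for p, q in zip(r, s)] for r, s in zip(x, y)]


def block_forward(x, w_in, w_out):
    """Glue: four lines that only say which kernel runs on which data."""
    h = layer_norm(x)
    h = matmul(h, w_in)
    h = matmul(h, w_out)
    return add(x, h)  # residual connection


TOKENS, WIDTH, HIDDEN = 8, 16, 64
x = [[((i * 3 + j) % 7) / 7 for j in range(WIDTH)] for i in range(TOKENS)]
w_in = [[((i + j) % 5 - 2) / 10 for j in range(HIDDEN)] for i in range(WIDTH)]
w_out = [[((i * 2 + j) % 5 - 2) / 10 for j in range(WIDTH)] for i in range(HIDDEN)]

out = block_forward(x, w_in, w_out)
print("glue statements: 4, multiply-adds in kernels:", OPS["multiply_adds"])
```

Even at these tiny sizes, the four glue statements trigger thousands of multiply-adds, and the gap widens as the dimensions grow.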

Modern programmable cryptography, like SNARKs, again follows a similar pattern on two levels. First, the prover can be written in a high-level language where the heavy lifting is done with vectorized operations, just like in the AI example above. My circle STARK code here shows this in action. Second, the programs that are executed inside the cryptography itself can be written in a way that is partitioned between general business logic and highly structured expensive work.

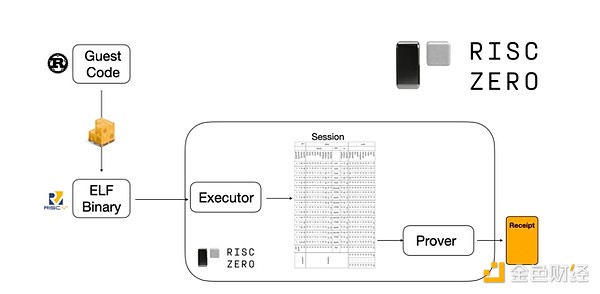

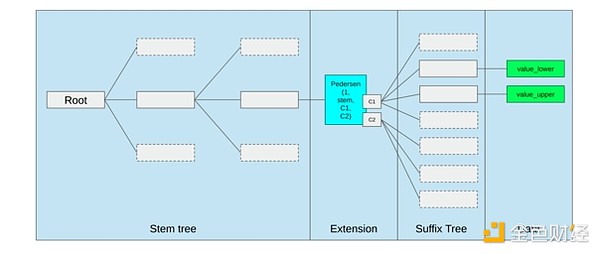

To understand how this works, we can look at one of the latest trends in STARK proofs. To be general and easy to use, teams are increasingly building STARK provers for widely adopted minimal virtual machines, such as RISC-V. Any program that needs to have its execution proven can be compiled into RISC-V, and then the prover can prove the RISC-V execution of that code.

Diagram from the RiscZero documentation

This is very convenient: it means we only have to write the proof logic once, and from then on, any program that needs a proof can be written in any "traditional" programming language (RiscZero supports Rust, for example). However, there is a problem: this approach incurs a lot of overhead. Programmable cryptography is already very expensive; adding the overhead of running code in a RISC-V interpreter is too much. So the developers came up with a trick: identify the specific expensive operations that make up the bulk of the computation (usually hashing and signing), and then create specialized modules to prove these operations very efficiently. Then you just combine the inefficient but general RISC-V proof system with the efficient but specialized proof system and you get the best of both worlds.
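The routing idea can be sketched with a toy stack machine. Everything here is hypothetical: the instruction set, the `run` function and the `accelerators` table are invented for illustration and are not RiscZero's API, and nothing is actually proven. In a real system, the interpreted steps would go through the general RISC-V proof system and the accelerated call through a dedicated one.

```python
import hashlib


def run(program, accelerators):
    """Interpret a tiny stack program, routing 'call' steps to accelerators."""
    stack = []
    steps = {"interpreted": 0, "accelerated": 0}
    for op, *args in program:
        if op == "push":
            stack.append(args[0])
            steps["interpreted"] += 1
        elif op == "concat":
            right, left = stack.pop(), stack.pop()
            stack.append(left + right)
            steps["interpreted"] += 1
        elif op == "call":
            # Hand the operand to a specialized module instead of
            # expanding the work into many interpreted steps.
            stack.append(accelerators[args[0]](stack.pop()))
            steps["accelerated"] += 1
        else:
            raise ValueError(f"unknown instruction: {op}")
    return stack.pop(), steps


# The "coprocessor": a specialized routine registered under a name.
accelerators = {"sha256": lambda data: hashlib.sha256(data).digest()}

program = [
    ("push", b"glue "),
    ("push", b"and coprocessor"),
    ("concat",),
    ("call", "sha256"),
]
digest, steps = run(program, accelerators)
print(digest.hex(), steps)
```

The program stays easy to write in the general instruction set, while the hash itself never passes through the interpreter.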

Programmable cryptography beyond ZK-SNARKs, such as multi-party computation (MPC) and fully homomorphic encryption (FHE), can likely be optimized using similar approaches.

What’s the picture in general?

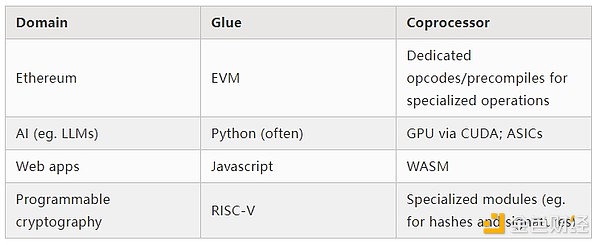

Modern computing increasingly follows what I call a glue and coprocessor architecture: you have some central “glue” component that has high generality but low efficiency, which is responsible for passing data between one or more coprocessor components that have low generality but high efficiency. This is a simplification: in practice, the trade-off curve between efficiency and generality almost always has more than two levels. GPUs and other chips, often called “coprocessors” in the industry, are less general than CPUs, but more general than ASICs. The trade-offs in degree of specialization are complex, and depend on predictions and intuitions about which parts of an algorithm will remain the same in five years, and which parts will change in six months. We often see similar multiple layers of specialization in ZK proof architectures. But for a broad mental model, it’s sufficient to consider two levels. There are similar situations in many areas of computing:

From the above examples, it certainly seems like a natural law that computation can be split in this way. In fact, you can find examples of computation specialization going back decades. However, I think this separation is increasing. And I think there are reasons for this:

We have only recently reached the limits of CPU clock speed increases, so further gains can only be made through parallelization. However, parallelization is difficult to reason about, so it is often more practical for developers to continue to reason sequentially and let parallelization happen in the backend, wrapped in dedicated modules built for specific operations.

Computation has only recently become so fast that the computational cost of business logic has become truly negligible. In this world, it also makes sense to optimize the VM where the business logic runs for goals other than computational efficiency: developer friendliness, familiarity, security, and other similar goals. Meanwhile, specialized "coprocessor" modules can continue to be designed for efficiency, and derive their security and developer friendliness from their relatively simple "interface" to the glue.

It is becoming increasingly clear what the most important expensive operations are. This is most evident in cryptography, where specific types of expensive operations are most likely to be used: modular arithmetic, elliptic curve linear combinations (aka multi-scalar multiplication), fast Fourier transforms, and so on. This is also becoming increasingly clear in AI, where for more than two decades, most computation has been "mostly matrix multiplication" (albeit at varying levels of precision). Similar trends are occurring in other areas. There are far fewer unknown unknowns in (compute-intensive) computations than there were 20 years ago.

What does this mean?

A key point is that glue should be optimized to be good glue, and coprocessors should be optimized to be good coprocessors. We can explore the implications of this in a few key areas.

EVM

Blockchain virtual machines (e.g., EVM) do not need to be efficient, just familiar. With the addition of the right coprocessors (aka "precompiles"), computations in an inefficient VM can actually be as efficient as computations in a native, efficient VM. For example, the overhead incurred by the EVM's 256-bit registers is relatively small, while the benefits of the EVM's familiarity and existing developer ecosystem are large and lasting. Development teams optimizing the EVM have even found that lack of parallelization is not generally a major barrier to scalability.

The best way to improve the EVM may simply be to (i) add better precompiles or specialized opcodes, e.g. some combination of EVM-MAX and SIMD might be reasonable, and (ii) improve the storage layout, e.g. the Verkle tree changes, as a side effect, greatly reduce the cost of accessing storage slots that are adjacent to each other.

Storage optimizations in Ethereum's Verkle tree proposal put adjacent storage keys together and adjust gas costs to reflect this. Optimizations like this, coupled with better precompiles, may be more important than tweaking the EVM itself.
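A simplified cost model shows why adjacency matters under such a layout. The constants and the grouping rule below are assumptions chosen only to resemble the shape of the proposal (one charge per group of neighboring slots, one smaller charge per slot). They are not the actual gas schedule, and `read_cost` is a hypothetical helper.

```python
BRANCH_COST = 1900  # hypothetical: charged once per group of adjacent slots
CHUNK_COST = 200    # hypothetical: charged once per distinct slot touched
GROUP_SIZE = 256    # assumption: neighboring slots are grouped 256 at a time


def read_cost(slots):
    """Gas to read a set of storage slots under the simplified model."""
    distinct = set(slots)
    groups = {slot // GROUP_SIZE for slot in distinct}
    return len(groups) * BRANCH_COST + len(distinct) * CHUNK_COST


# Ten neighboring slots versus ten slots spread far apart.
adjacent = range(1024, 1024 + 10)
scattered = [1024 + i * 10_000 for i in range(10)]

adjacent_cost = read_cost(adjacent)
scattered_cost = read_cost(scattered)
print("adjacent:", adjacent_cost, "scattered:", scattered_cost)
```

Under these assumed numbers, ten neighboring slots share a single group charge, while ten scattered slots each pay it separately.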

Secure Computing and Open Hardware

A big challenge in improving the security of modern computing at the hardware level is its overly complex and proprietary nature: chips are designed to be efficient, which requires proprietary optimizations. Backdoors are easy to hide, and side-channel vulnerabilities are constantly being discovered.

There continues to be an effort to push for more open, more secure alternatives from multiple angles. Some computing is increasingly done in trusted execution environments, including on users’ phones, which has improved security for users. The push for more open source consumer hardware continues, with some recent wins, such as a RISC-V laptop running Ubuntu.

RISC-V laptop running Debian

However, efficiency remains an issue. The author of the above linked article writes:

Newer open source chip designs such as RISC-V cannot possibly compete with processor technology that has been around and improved for decades. Progress always has to start somewhere.

More paranoid ideas, such as this design of building a RISC-V computer on an FPGA, face greater overhead. But what if glue and coprocessor architectures mean that this overhead is not actually significant? What if we accept that open and secure chips will be slower than proprietary chips, even giving up common optimizations like speculative execution and branch prediction if necessary, but try to make up for this by adding (proprietary if necessary) ASIC modules that are used for specific types of computation that are most intensive? Sensitive computations can be done in the "main chip", which will be optimized for security, open source design, and side-channel resistance. More intensive computations (e.g. ZK proofs, AI) will be done in ASIC modules, which will know less information about the computation being performed (potentially, through cryptographic blinding, and possibly even zero information in some cases).

Cryptography

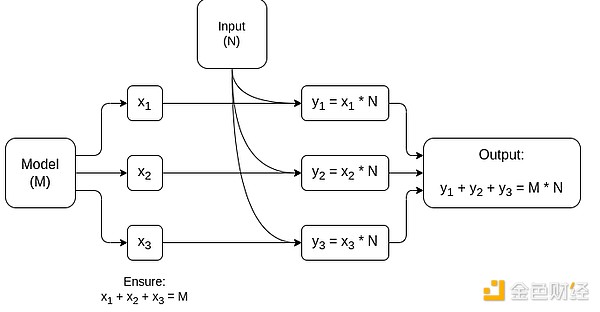

Another key point is that all of this is very encouraging for cryptography, and especially programmable cryptography, becoming mainstream. We’ve already seen super-optimized implementations of certain highly structured computations in SNARK, MPC, and other settings: some hash functions are only a few hundred times more expensive than running the computation directly, and the overhead for AI (mostly matrix multiplication) is also very low. Further improvements such as GKR may reduce this further. Fully general VM execution, especially when executed in a RISC-V interpreter, will probably continue to have an overhead of about ten thousand times, but for the reasons described in this post this doesn’t matter: as long as the most intensive parts of the computation are handled separately using efficient, specialized techniques, the total overhead is manageable.
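The claim that the total overhead stays manageable is a weighted average, sketched below. The ten-thousand-times figure comes from the text; the value of 200 for "a few hundred times" and the 1% / 99% split of the native workload are assumptions picked for illustration.

```python
def total_overhead(parts):
    """parts: (fraction of native work, proving overhead) pairs summing to 1."""
    assert abs(sum(fraction for fraction, _ in parts) - 1.0) < 1e-9
    return sum(fraction * overhead for fraction, overhead in parts)


GENERAL_VM = 10_000  # from the text: roughly ten thousand times
SPECIALIZED = 200    # assumption: one value for "a few hundred times"

# Everything runs through the general VM.
all_general = total_overhead([(1.0, GENERAL_VM)])

# Assumed split: 1% business logic in the VM, 99% in specialized modules.
split = total_overhead([(0.01, GENERAL_VM), (0.99, SPECIALIZED)])

print(f"all general: {all_general:.0f}x, split: {split:.0f}x")
```

With this assumed split, the blended overhead lands close to the specialized figure rather than the general one, which is the sense in which the VM's inefficiency stops mattering.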

A simplified diagram of a specialized MPC for matrix multiplication, the largest component in AI model inference. See this article for more details, including how to keep models and inputs private.

One exception to the idea that “glue layers only need to be familiar, not efficient” is latency, and to a lesser extent data bandwidth. If the computation involves heavy operations on the same data dozens of times, as in cryptography and AI, then any latency caused by an inefficient glue layer can become a major bottleneck to runtime. Therefore, glue layers also have efficiency requirements, although these are more specific.
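A rough way to see the latency exception: if every pass over the data pays a fixed glue delay, that delay grows as a share of runtime when the coprocessor gets faster. The timings below are made-up assumptions, and `glue_share` is a hypothetical helper.

```python
def glue_share(compute_us, glue_latency_us):
    """Fraction of each pass spent waiting on the glue layer."""
    return glue_latency_us / (compute_us + glue_latency_us)


PASSES = 50           # assumption: "dozens" of passes over the same data
GLUE_LATENCY_US = 50  # assumption: fixed glue delay per pass, in microseconds

slow_kernel = glue_share(1_000, GLUE_LATENCY_US)  # 1,000 us of compute per pass
fast_kernel = glue_share(10, GLUE_LATENCY_US)     # 10 us of compute per pass
total_fast_us = PASSES * (10 + GLUE_LATENCY_US)

print(f"glue share with slow kernel: {slow_kernel:.1%}")
print(f"glue share with fast kernel: {fast_kernel:.1%}")
print(f"total runtime with fast kernel: {total_fast_us} us")
```

With the assumed figures, the same glue delay that is a rounding error next to a slow kernel takes up most of the runtime once the kernel is fast.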

Conclusion

Overall, I think the trends described above are very positive developments from multiple perspectives. First, it is a reasonable approach to maximize computational efficiency while remaining developer-friendly, and being able to get more of both is good for everyone. In particular, by enabling specialization on the client side to improve efficiency, it improves our ability to run sensitive and performance-demanding computations (e.g. ZK proofs, LLM inference) locally on user hardware. Second, it creates a huge window of opportunity to ensure that the pursuit of efficiency does not compromise other values, most notably security, openness, and simplicity: side-channel security and openness in computer hardware, reduced circuit complexity in ZK-SNARKs, and reduced complexity in virtual machines. Historically, the pursuit of efficiency has caused these other factors to take a backseat. With glue and coprocessor architectures, it no longer has to. One part of the machine optimizes for efficiency, another part optimizes for generality and other values, and the two work together.

This trend is also very good for cryptography, because cryptography itself is a prime example of "expensive structured computation," and this trend accelerates that. This adds another opportunity for improved security. In the blockchain world, improved security is also possible: we can worry less about optimizing the VM and more about optimizing precompiles and other features that coexist with the VM.

Third, this trend provides opportunities for smaller, newer players to participate. If computation becomes less monolithic and more modular, this will greatly reduce the barrier to entry. Even an ASIC built for a single type of computation has the potential to make a difference. This is also true in the field of ZK proofs and EVM optimization. It becomes easier and more accessible to write code with near-cutting-edge efficiency. It becomes easier and more accessible to audit and formally verify such code. Finally, as these very different areas of computing converge on some common patterns, there is room for more collaboration and learning between them.