Discussion on BTC block size, transaction size, opcode quantity limit and other issues

Bitcoin has a block size limit of 1M transaction block + 3M signature block. There are size and opcode number restrictions for each transaction.

JinseFinance


Author: Vitalik, founder of Ethereum; Translator: Deng Tong, Golden Finance

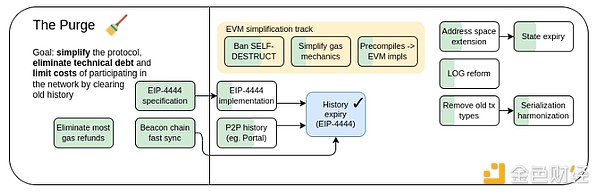

Note: This article is the fifth part of the series of articles recently published by Vitalik, founder of Ethereum, on “The Future Development of the Ethereum Protocol”, “Possible futures of the Ethereum protocol, part 5: The Purge”. For the fourth part, see “Vitalik: The Future of Ethereum The Verge”. For the third part, see "Vitalik: Key goals of Ethereum's The Scourge phase", for the second part, see "Vitalik: How should the Ethereum protocol develop in The Surge phase", and for the first part, see "What else can be improved in Ethereum PoS". The following is the full text of the fifth part:

Special thanks to Justin Drake, Tim Beiko, Matt Garnett, Piper Merriam, Marius van der Wijden and Tomasz Stanczak for their feedback and comments.

One of the challenges facing Ethereum is that, by default, any blockchain protocol tends to grow in bloat and complexity over time. This happens in two ways:

Historical data: Any transaction and any account created at any historical moment needs to be stored permanently by all clients and downloaded by any new client that fully syncs with the network. This causes client load and sync time to continue to increase over time, even if the capacity of the chain remains constant.

Protocol features: It is much easier to add new features than to remove old ones, causing code complexity to increase over time.

For Ethereum to be sustainable in the long term, we need to exert strong counter-pressure on both of these trends, reducing complexity and bloat over time. But at the same time, we need to preserve one of the key properties of blockchains: persistence. You can put an NFT, a love letter in transaction call data, or a smart contract containing a million dollars on the chain, go into a cave for ten years, and come out to find it is still there waiting for you to read and interact with it. In order for dapps to feel comfortable fully decentralizing and removing upgrade keys, they need to be confident that their dependencies won’t be upgraded in a way that breaks them — especially L1 itself.

The Purge, 2023 Roadmap.

It is absolutely possible to strike a balance between these two needs, minimizing or reversing bloat, complexity, and decay while maintaining continuity, if we put our minds to it. Organisms can do this: while most organisms age over time, a fortunate few do not. Even social systems can have extremely long lifespans. Ethereum has already succeeded in some cases: proof of work is gone, the SELFDESTRUCT opcode is mostly gone, and beacon chain nodes already store old data for only up to six months. Finding this path for Ethereum more generally, and moving toward a long-term stable end result, is the ultimate challenge for Ethereum's long-term scalability, technical sustainability, and even security.

The Purge: Primary Goals

Reduce client storage requirements by reducing or eliminating the need for each node to store all history permanently, and perhaps eventually even state

Reduce protocol complexity by eliminating unneeded functionality

As of this writing, a fully synced Ethereum node requires about 1.1 TB of disk space for the execution client, and a few hundred GB more for the consensus client. The vast majority of this is historical data: data about historical blocks, transactions, and receipts, much of which is many years old. This means that even if the gas limit didn't increase at all, the size of the node would increase by hundreds of GB per year.

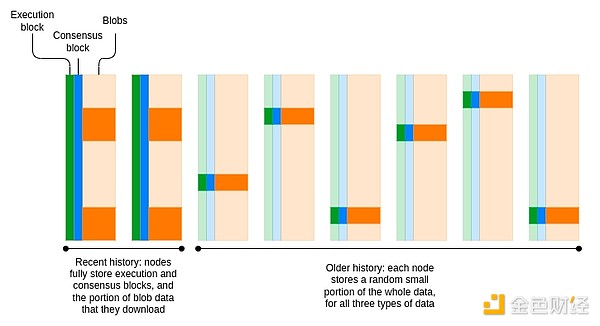

A key simplifying property of the history storage problem is that, since each block points to the previous block via hash links (and other structures), consensus on the present is sufficient to reach consensus on the history. As long as the network agrees on the latest block, any historical block, transaction, or state (account balance, nonce, code, storage) can be provided by any single participant, accompanied by a Merkle proof, and that proof allows anyone else to verify its correctness. While consensus is an N/2-of-N trust model, history is a 1-of-N trust model.
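To make the 1-of-N property concrete, here is a minimal sketch of checking a single historical item against a root that everyone agrees on. It assumes a simplified binary Merkle tree over SHA-256 with a power-of-two number of leaves. Ethereum's execution layer actually uses Merkle Patricia tries and Keccak-256, so the function names and tree layout here are illustrative only.

```python
import hashlib

def h(data: bytes) -> bytes:
    # Stand-in hash (SHA-256); Ethereum's execution layer uses Keccak-256.
    return hashlib.sha256(data).digest()

def merkle_root_and_proof(leaves: list[bytes], index: int) -> tuple[bytes, list[bytes]]:
    """Return the root of a binary Merkle tree plus the sibling path for leaves[index].
    Assumes len(leaves) is a power of two to keep the sketch short."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        proof.append(level[index ^ 1])  # sibling at this level
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof

def verify(leaf: bytes, index: int, proof: list[bytes], root: bytes) -> bool:
    """Anyone holding only the agreed-upon root can check data served by one peer."""
    node = h(leaf)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root
```

A single honest peer is enough: `verify(b"tx2", 2, proof, root)` succeeds for the real data, and any substituted data fails against the same root.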

This opens up a lot of options for how we store history. A natural choice is a network where each node stores only a small fraction of the data. This is how torrent networks have worked for decades: while the network stores and distributes millions of files in total, each participant stores and distributes only a few of them. Perhaps counterintuitively, this approach doesn't even necessarily reduce the robustness of the data. If by reducing the cost of running nodes we can achieve a network of 100,000 nodes where each node stores a random 10% of the history, then each piece of data will be replicated 10,000 times - exactly the same replication factor as a 10,000 node network where each node stores everything.
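The replication arithmetic above can be checked with a short sketch. It assumes each node independently keeps each piece of history with a fixed probability. That independence assumption is a simplification, since real assignment schemes may be coordinated.

```python
import math

def expected_replicas(num_nodes: int, fraction_stored: float) -> float:
    """Expected copies of one piece of history if every node independently
    keeps each piece with probability `fraction_stored`."""
    return num_nodes * fraction_stored

def log10_prob_piece_lost(num_nodes: int, fraction_stored: float) -> float:
    """log10 of P(no node keeps a given piece) = (1 - f)^N, kept in log form
    because the probability underflows a float. Requires fraction_stored < 1."""
    return num_nodes * math.log10(1 - fraction_stored)
```

`expected_replicas(100_000, 0.10)` and `expected_replicas(10_000, 1.0)` both come to 10,000. Under the independence assumption, `log10_prob_piece_lost(100_000, 0.10)` is about -4,576, so a random 10% scheme essentially never loses a piece outright.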

Today, Ethereum has begun to move away from a model where all nodes store all history permanently. Consensus blocks (i.e. the part related to proof-of-stake consensus) are only stored for about 6 months. Blobs are only stored for about 18 days. EIP-4444 aims to introduce a one-year storage period for historical blocks and receipts. The long-term goal is to have a coordinated period (probably about 18 days) during which each node is responsible for storing everything, and then have a peer-to-peer network of Ethereum nodes store old data in a distributed manner.
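The retention windows described above can be summarized as a small policy table. This is only a sketch. The durations are the approximate figures from the text (EIP-4444's one year is a proposal, not current behavior), and the names `RETENTION` and `must_store_locally` are hypothetical.

```python
from datetime import timedelta

# Approximate windows taken from the text; illustrative, not client config values.
RETENTION = {
    "consensus_block": timedelta(days=183),  # "about 6 months"
    "blob": timedelta(days=18),              # "about 18 days"
    "execution_block": timedelta(days=365),  # EIP-4444 proposal: one year
    "receipt": timedelta(days=365),          # EIP-4444 proposal: one year
}

def must_store_locally(kind: str, age: timedelta) -> bool:
    """True while every node is expected to keep the object; afterwards it is
    left to the (assumed) distributed history network."""
    return age <= RETENTION[kind]
```

For example, a 30-day-old blob falls outside its window, while a 200-day-old execution block would still be stored locally under the EIP-4444 proposal.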

Erasure coding can be used to improve robustness while keeping the replication factor unchanged. In fact, to support data availability sampling, blobs already use erasure coding. The simplest solution may be to reuse this erasure coding and put the execution and consensus block data into the blob as well.
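As an illustration of why erasure coding helps, here is a minimal Reed-Solomon-style sketch. It treats k data values as evaluations of a polynomial, extends them to 2k evaluations, and recovers the original data from any k survivors by Lagrange interpolation. The small prime field is an assumption made for readability. Blob encoding works over the BLS12-381 scalar field and differs in many details.

```python
P = 2**31 - 1  # small prime field for illustration only

def lagrange_eval(points: list[tuple[int, int]], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(points) through `points`, mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if j != i:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total

def extend(data: list[int]) -> list[int]:
    """Treat data[i] as the value at x=i and add k more evaluations (rate 1/2)."""
    k = len(data)
    points = list(enumerate(data))
    return data + [lagrange_eval(points, x) for x in range(k, 2 * k)]

def recover(known: dict[int, int], k: int) -> list[int]:
    """Rebuild the original k values from any k surviving (index, value) pairs."""
    points = list(known.items())[:k]
    return [lagrange_eval(points, x) for x in range(k)]
```

With `data = [5, 17, 42, 99]`, any four of the eight values in `extend(data)`, such as indices 1, 4, 6 and 7, are enough for `recover` to return `data`.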

EIP-4444: https://eips.ethereum.org/EIPS/eip-4444

Torrents and EIP-4444: https://ethresear.ch/t/torrents-and-eip-4444/19788

Portal network; Portal network and EIP-4444; distributed storage and retrieval of SSZ objects in Portal: https://ethresear.ch/t/distributed-storage-and-cryptographically-secured-retrieval-of-ssz-objects-for-portal-network/19575

How to raise the gas limit (paradigm): https://www.paradigm.xyz/2024/05/how-to-raise-the-gas-limit-2

The main remaining work involves building and integrating a concrete distributed solution for storing history: at least execution history, but eventually consensus and blobs as well. The simplest solutions are (i) simply bringing in an existing torrent library, and (ii) an Ethereum-native solution called the Portal network. Once either of these is in place, we can enable EIP-4444. EIP-4444 itself does not require a hard fork, but it does require a new network protocol version. It is therefore valuable to enable it for all clients at the same time. Otherwise, clients risk malfunctioning when they connect to peers expecting to download the full history and do not actually get it.

The main trade-off involves how hard we try to make the “past” history available. The simplest solution is to stop storing past history tomorrow, and rely on existing archive nodes and various centralized providers to replicate it. This is easy, but it weakens Ethereum’s position as a permanent record of data. The harder but safer approach is to first build and integrate a torrent network to store history in a distributed way. There are two dimensions of “how hard we try” here:

(1) How hard do we try to ensure that the maximum number of nodes actually store all the data?

(2) How deeply do we integrate history storage into the protocol?

For (1), the most rigorous approach would involve proof of custody: effectively requiring each proof-of-stake validator to store a certain percentage of the history, and periodically cryptographically checking that they do so. A more modest approach would be to set a voluntary standard for the percentage of history stored by each client.
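The sketch below shows the general shape of "each validator stores a certain percentage, and we periodically check". It is **not** a real proof-of-custody construction, since real designs tie the response to a validator secret so that the duty cannot be outsourced. It is a plain challenge-response spot check. The chunk count, the 5% fraction, and all function names are hypothetical.

```python
import hashlib
import random
from typing import Dict, List

NUM_CHUNKS = 1000          # hypothetical: history split into 1,000 chunks
CUSTODY_FRACTION = 0.05    # hypothetical: each validator keeps ~5% of history

def is_assigned(validator_id: int, chunk_id: int) -> bool:
    # Deterministic pseudorandom assignment: anyone can recompute which chunks
    # a given validator is responsible for.
    digest = hashlib.sha256(f"{validator_id}:{chunk_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") < CUSTODY_FRACTION * 2**64

def assigned_chunks(validator_id: int) -> List[int]:
    return [c for c in range(NUM_CHUNKS) if is_assigned(validator_id, c)]

def spot_check(validator_id: int, storage: Dict[int, bytes],
               chunk_hashes: Dict[int, bytes], rng: random.Random) -> bool:
    """Challenge one randomly chosen assigned chunk; pass only if the returned
    data matches a hash the verifier already trusts (e.g. via a Merkle root)."""
    chunk_id = rng.choice(assigned_chunks(validator_id))
    data = storage.get(chunk_id)
    return data is not None and hashlib.sha256(data).digest() == chunk_hashes[chunk_id]
```

A validator that keeps its assigned chunks passes. One that stores nothing fails the first challenge it receives.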

For (2), the basic implementation involves only what is already done today: Portal already stores ERA files containing the entire Ethereum history. A more thorough implementation would involve actually connecting this to the sync process, so that if someone wants to sync a full history storage node or archive node, they can do so by syncing directly from the Portal network, even if no other archive nodes are online.

If we want to make it extremely simple to run or spin up a node, then reducing the history storage requirement is arguably more important than statelessness: of the 1.1 TB a node requires, about 300 GB is state, and the remaining about 800 GB is history. The vision of an Ethereum node running on a smartwatch and taking only minutes to set up is only possible if both statelessness and EIP-4444 are implemented.

Limiting history storage also makes it more feasible for newer Ethereum node implementations to only support the latest version of the protocol, which makes them much simpler. For example, since the empty storage slots created during the 2016 DoS attack have all been deleted, many lines of code can be safely deleted. Now that the switch to proof-of-stake is history, clients can safely delete all proof-of-work related code.

Even if we eliminate the need for clients to store history, client storage requirements will keep growing, by about 50 GB per year, because the state keeps growing: account balances and nonces, contract code, and contract storage. Users can pay a one-time fee to impose a burden on current and future Ethereum clients forever.

State is harder to "expire" than history because the EVM is designed with the fundamental assumption that once a state object is created, it will exist forever and can be read by any transaction at any time. If we introduce statelessness, some argue that this problem might not be so bad: only a specialized class of block builders would need to actually store state, and all other nodes (even inclusion list production!) could run statelessly. However, others argue that we don't want to rely too heavily on statelessness, and that eventually we might want state to expire to keep Ethereum decentralized.

Today, when you create a new state object (which can be done in one of three ways: (i) sending ETH to a new account, (ii) creating a new account with code, (iii) writing to a previously untouched storage slot), that state object stays in the state forever. Instead, what we want is for objects to automatically expire over time. The key challenge is to do this in a way that achieves three goals:

Efficiency: It doesn’t require a lot of extra computation to run the expiration process.

User-Friendliness: If someone goes into a cave and comes back five years later, they shouldn't lose access to their ETH, ERC20, NFT, CDP position…

Developer-Friendliness: Developers shouldn't have to switch to completely alien mental models. Also, applications that are ossified today and don't update should continue to function reasonably.

The problem is easy to solve without meeting these goals. For example, you could have each state object also store a counter to record its expiration date (which can be extended by burning ETH, which can happen automatically on reads or writes), and have a process that loops through the state to remove expired state objects. However, this introduces additional computation (and even storage requirements), and certainly doesn’t meet the user-friendliness requirement. It’s also hard for developers to reason about corner cases involving stored values sometimes resetting to zero. If you make the expiration timer contract-wide, this makes the developer’s job easier technically, but makes it harder economically: developers have to think about how to “pass on” the ongoing storage costs to their users.

These are all problems that the Ethereum core development community has been grappling with for years, with proposals like “blockchain rent” and “resurrection.” Ultimately, we combined the best parts of the proposals and converged on two categories of “least bad known solutions”:

Partial state expiration solutions.

Address-period-based state expiration proposals.

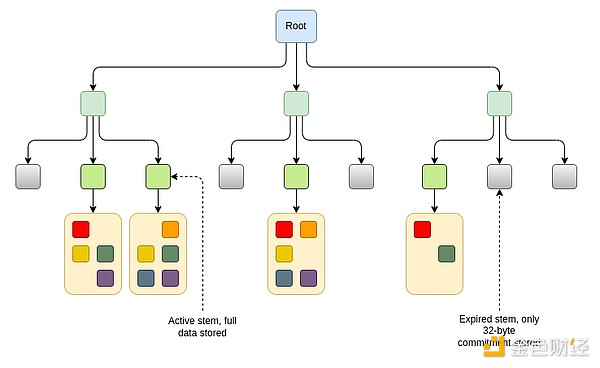

The partial state expiration proposals all follow the same principle. We split the state into chunks. Every node permanently stores a "top-level map" of which chunks are empty or non-empty. The data in each chunk is only stored if it has been recently accessed. There is a "resurrection" mechanism whereby, if a chunk is no longer stored, anyone can recover that data by providing a proof of what the data was.

The main differences between these proposals are: (i) how do we define "recently," and (ii) how do we define "chunk"? One specific proposal is EIP-7736, which builds on the "stem-and-leaf" design introduced for Verkle trees (although it is compatible with any form of stateless tree, such as binary trees). In this design, account headers, code, and storage slots are stored next to each other under the same "stem". The data stored under a stem can be up to 256 * 31 = 7,936 bytes. In many cases, the entire header and code of an account, as well as many key storage slots, will be stored under the same stem. If the data under a given stem has not been read or written for 6 months, the data is no longer stored, and only a 32-byte commitment to the data (a "stub") is stored. Future transactions accessing that data will need to "resurrect" the data and provide a proof that checks against the stub.
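Here is a minimal sketch of the stem lifecycle described above: data expires to a 32-byte stub after a period without access, and a later access must resurrect it with data that matches the stub. The SHA-256 "commitment", the six-month constant in seconds, and the `Stem` class are assumptions for illustration. EIP-7736 itself specifies the real tree commitments and proof format.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Optional

EXPIRY_PERIOD = 183 * 24 * 3600  # "6 months" approximated in seconds (assumption)

def commit(leaves: Dict[int, bytes]) -> bytes:
    """Stand-in 32-byte commitment (SHA-256 over index, length, value); a real
    design would reuse the state tree's own commitment scheme."""
    h = hashlib.sha256()
    for idx in sorted(leaves):
        h.update(bytes([idx, len(leaves[idx])]) + leaves[idx])
    return h.digest()

@dataclass
class Stem:
    leaves: Optional[Dict[int, bytes]]   # up to 256 leaves of <= 31 bytes; None once expired
    stub: Optional[bytes] = None         # the 32-byte commitment kept after expiry
    last_access: int = 0

    def expire_if_stale(self, now: int) -> None:
        if self.leaves is not None and now - self.last_access > EXPIRY_PERIOD:
            self.stub, self.leaves = commit(self.leaves), None

    def read(self, index: int, now: int,
             witness: Optional[Dict[int, bytes]] = None) -> Optional[bytes]:
        if self.leaves is None:
            # Resurrection: the caller supplies the old data, which must match the stub
            if witness is None or commit(witness) != self.stub:
                raise ValueError("expired stem: valid resurrection witness required")
            self.leaves, self.stub = dict(witness), None
        self.last_access = now  # any access resets the expiry clock
        return self.leaves.get(index)
```

Because every field under a stem shares one clock, a single access keeps an account's header, code and hot storage alive together. This is the incentive alignment that the stem-based approach relies on.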

There are other ways to implement similar ideas. For example, if account-level granularity is not enough, we could make a scheme where each 1/2^32 fraction of the tree is governed by a similar stem-and-leaf mechanism.

This is trickier because of incentives: an attacker could force clients to store large amounts of state permanently by putting large amounts of data into a single subtree and sending a single transaction every year to "refresh" it. If you make the update cost proportional to the subtree size (or the renewal duration inversely proportional to it), then someone could harm another user by putting large amounts of data into the same subtree as them. One could try to limit both of these issues by making the granularity dynamic based on subtree size: for example, each consecutive 2^16 = 65536 state objects could be treated as a "group". However, these ideas are more complex; the stem-based approach is simple and aligns incentives, because typically all the data under a stem relates to the same application or user.

What if we want to completely avoid any permanent state growth, even with a 32-byte stub? This is a hard problem: what if a state object is deleted, a later EVM execution puts another state object in the exact same place, and then someone who cares about the original state object comes back and tries to recover it? With partial state expiration, the "stub" prevents new data from being put in the same place. With full state expiration, we can't even store a stub.

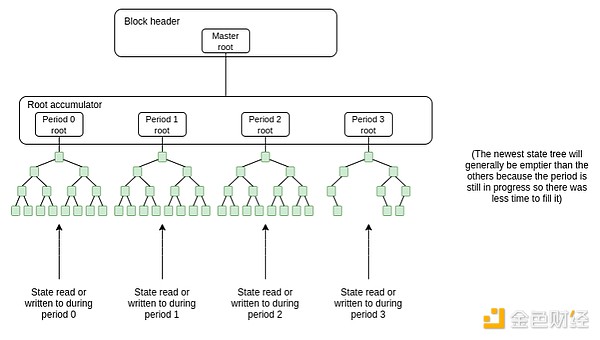

The address-period-based design is the best known way to solve this problem. Instead of having one state tree store the entire state, we have a growing list of state trees, and any state that is read or written is saved to the most recent state tree. A new empty state tree is added once per period (think: 1 year). Older state trees are frozen. Full nodes only need to store the two most recent trees. If a state object has not been touched in two periods and thus falls into an expired tree, it can still be read or written, but transactions need to provide a Merkle proof for it. Once this is done, a copy is saved in the most recent tree again.

A key idea that makes this all user- and developer-friendly is the concept of address periods. An address period is a number that is part of an address. A key rule is that an address with address period N can only be read from or written to during or after period N (i.e. when the state tree list reaches length N). If you want to save a new state object (e.g. a new contract or a new ERC20 balance), you can save it immediately, without proving that nothing was there before, as long as you put it into a contract whose address period is N or N-1. On the other hand, any additions or edits to state in older address periods require a proof.
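The rules above can be captured in a toy model. In the model, one tree exists per period, full nodes consult only the last two, any touch copies the object into the newest tree, and an object whose address period is recent enough can be proven absent without a proof. The class and method names are hypothetical. Merkle proof verification and proofs of absence for old periods are not modelled: `proven_value` is simply trusted where a real client would check a proof.

```python
from typing import Dict, List, Optional

class PeriodState:
    """Toy model of address-period state expiry; all names are hypothetical.
    trees[i] is the state tree for period i; reads only consult the last two."""

    def __init__(self) -> None:
        self.trees: List[Dict[bytes, bytes]] = [{}]

    @property
    def period(self) -> int:
        return len(self.trees) - 1

    def start_new_period(self) -> None:
        # e.g. once a year; the previous newest tree becomes frozen
        self.trees.append({})

    def write(self, addr_period: int, key: bytes, value: bytes) -> None:
        # Only the proof-free case is modelled: address period N or N-1
        if not self.period - 1 <= addr_period <= self.period:
            raise ValueError("writes in older address periods need a proof (not modelled)")
        self.trees[-1][key] = value

    def read(self, addr_period: int, key: bytes,
             proven_value: Optional[bytes] = None) -> Optional[bytes]:
        if addr_period > self.period:
            raise ValueError("address period not reached yet")
        for tree in reversed(self.trees[-2:]):   # the two trees a full node stores
            if key in tree:
                self.trees[-1][key] = tree[key]  # any touch copies into the newest tree
                return tree[key]
        if addr_period >= self.period - 1:
            return None  # object could only live in the two stored trees: absence is known
        if proven_value is None:
            raise ValueError("object may be in an expired tree: Merkle proof required")
        # A real client would verify a Merkle proof against an old tree root here.
        self.trees[-1][key] = proven_value
        return proven_value
```

Writing a balance in period 0 and then advancing two periods leaves it in an expired tree: reading it without a proof fails, and reading it with one copies it back into the current tree.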

This design preserves most of Ethereum's current properties, requires very little extra computation, allows applications to be written almost as they are today (ERC20s would need to be rewritten to ensure that balances for addresses with address period N are stored in a child contract that itself has address period N), and solves the "users in caves for five years" problem. However, it has one big problem: addresses need to be expanded to more than 20 bytes to fit in an address period.

One proposal is to introduce a new 32-byte address format that includes a version number, an address period number, and an extended hash.

0x01000000000157aE408398dF7E5f4552091A69125d5dFcb7B8C2659029395bdF

Reading left to right, the first byte (01) is the version number. The next two bytes (the four zeros, 0000) are left blank to accommodate shard numbers in the future. The next three bytes (000001) are the address period number. The remaining 26 bytes are the hash.
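To make the field boundaries concrete, here is a sketch that splits the example address into its parts. The byte widths are inferred from the example and from the 26-byte hash figure (1 + 2 + 3 + 26 = 32). The function name is hypothetical and not part of any specification.

```python
def parse_extended_address(addr: str) -> dict:
    """Split a 32-byte address into version, reserved shard bytes,
    address period and hash (layout inferred from the example)."""
    raw = bytes.fromhex(addr.removeprefix("0x"))
    if len(raw) != 32:
        raise ValueError("expected a 32-byte address")
    return {
        "version": raw[0],                                # 1 byte
        "shard": int.from_bytes(raw[1:3], "big"),         # 2 reserved bytes
        "address_period": int.from_bytes(raw[3:6], "big"),  # 3 bytes
        "hash": raw[6:].hex(),                            # 26 bytes
    }
```

Applied to the example address, this gives version 1, shard 0, address period 1, and a 26-byte hash.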

The key challenge here is backward compatibility. Existing contracts are designed around 20-byte addresses and often use tight byte-packing techniques that explicitly assume addresses are exactly 20 bytes long. One idea to solve this problem is a translation map, where old-style contracts interacting with new-style addresses would see a 20-byte hash of the new-style address. However, making this secure involves significant complexity.

Another approach does the opposite: we immediately ban some subrange of addresses of size 2^128 (e.g. all addresses starting with 0xffffffff), and then use that range to introduce addresses with address periods and 14-byte hashes.

0xffffffff000169125d5dFcb7B8C2659029395bdF
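A sketch of recognizing and splitting the compact format: a 4-byte 0xffffffff prefix, then what the example suggests is a 2-byte address period, then the 14-byte hash (4 + 2 + 14 = 20). The 16 bytes after the prefix are what make the banned range 2^128 in size. The 2/14 split is inferred from the example, and the names are hypothetical.

```python
BANNED_PREFIX = bytes.fromhex("ffffffff")  # example prefix from the text

def parse_compact_address(addr: str):
    """Return the address period and hash for a 20-byte address in the banned
    range, or None for an ordinary legacy address."""
    raw = bytes.fromhex(addr.removeprefix("0x"))
    if len(raw) != 20 or not raw.startswith(BANNED_PREFIX):
        return None
    return {
        "address_period": int.from_bytes(raw[4:6], "big"),  # 2 bytes
        "hash": raw[6:].hex(),                              # 14 bytes
    }
```

Applied to the example, this returns address period 1 and a 14-byte hash. An ordinary address outside the range returns `None`.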

The key sacrifice made by this approach is that it introduces a security risk for counterfactual addresses: addresses that hold assets or permissions but whose code has not yet been published to the chain. The risk involves someone creating an address that claims to hold a piece of (yet unpublished) code, but there is another valid piece of code that hashes to the same address. Computing such a collision would require 2^80 hashes today; address space contraction would reduce that number to a very accessible 2^56 hashes.
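The 2^80 and 2^56 figures follow from the birthday bound. Finding any collision in an n-bit hash takes roughly 2^(n/2) evaluations, and 20 bytes is 160 bits while 14 bytes is 112 bits. The helper name below is illustrative.

```python
def collision_work_log2(hash_bytes: int) -> float:
    """Birthday bound: about 2^(n/2) hash evaluations to find a collision in an n-bit hash."""
    return hash_bytes * 8 / 2
```

`collision_work_log2(20)` is 80 and `collision_work_log2(14)` is 56, so the contraction makes collisions about 2^24 (roughly 16.8 million) times cheaper.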

The key risk area, counterfactual addresses for wallets that are not held by a single owner, is relatively rare today, but is likely to become more common as we move into a multi-L2 world. The only solution is to simply accept this risk, but to identify all the common use cases where it could go wrong and come up with efficient workarounds.

Early proposal

Blockchain rent: https://github.com/ethereum/EIPs/issues/35

Ethereum state size management theory: https://hackmd.io/@vbuterin/state_size_management

Several possible paths of statelessness and state expiration: https://hackmd.io/@vbuterin/state_expiry_paths

Partial State Expiration Proposal

EIP-7736: https://eips.ethereum.org/EIPS/eip-7736

Address Space Extension Document

Original Proposal: https://ethereum-magicians.org/t/increasing-address-size-from-20-to-32-bytes/5485

Ipsilon comment: https://notes.ethereum.org/@ipsilon/address-space-extension-exploration

Blog post comments: https://medium.com/@chaisomsri96/statelessness-series-part2-ase-address-space-extension-60626544b8e6

An important point is that, whether or not a state expiration scheme that relies on address format changes is implemented, the hard problem of address space expansion and contraction must be solved eventually. Today, it takes about 2^80 hashes to produce an address collision, and this computational load is already feasible for extremely well-resourced actors: a GPU can do about 2^27 hashes per second, so it can compute about 2^52 hashes in a year of operation, and all ~2^30 GPUs in the world together could compute a collision in about 1/4 of a year. FPGAs and ASICs can speed this up further. In the future, such attacks will be open to more and more people. So the actual cost of implementing full state expiration may not be as high as it seems, because we have to solve this very challenging address problem anyway.
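The back-of-the-envelope estimate above can be reproduced directly. The per-GPU rate and the global GPU count are the rough figures from the text, not measurements, and the variable names are illustrative.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600
GPU_HASHES_PER_SEC_LOG2 = 27   # ~2^27 hashes/s per GPU (figure from the text)
NUM_GPUS_LOG2 = 30             # ~2^30 GPUs worldwide (figure from the text)
COLLISION_WORK_LOG2 = 80       # birthday bound for a 160-bit (20-byte) address

# Hashes one GPU computes in a year, as a power of two (~2^52)
per_gpu_year_log2 = GPU_HASHES_PER_SEC_LOG2 + math.log2(SECONDS_PER_YEAR)
# Years for every GPU in the world working together to reach 2^80 hashes
years_for_all_gpus = 2 ** (COLLISION_WORK_LOG2 - per_gpu_year_log2 - NUM_GPUS_LOG2)
```

This gives `per_gpu_year_log2` of about 51.9 and `years_for_all_gpus` of about 0.27, which matches the "about 1/4 of a year" estimate.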

Enforcing state expiration may make it easier to transition from one state tree format to another, because no conversion process is required: you can simply start making a new tree with the new format, and then do a hard fork later to convert the old tree. So while state expiration is complex, it does help simplify other aspects of the roadmap.

One of the key prerequisites for security, accessibility, and trusted neutrality is simplicity. If the protocol is beautiful and simple, the likelihood of bugs is reduced. It increases the chances that new developers will be able to come in and use any part of it. It is more likely to be fair, and easier to defend against special interests. Unfortunately, protocols, like any social system, by default become more complex over time. If we don’t want Ethereum to fall into a black hole of increasing complexity, we need to do one of two things: (i) stop making changes and ossifying the protocol, or (ii) be able to actually remove features and reduce complexity. A middle path, where fewer changes are made to the protocol while removing at least a little complexity over time, is also possible. This section discusses how to reduce or eliminate complexity.

There is no big single fix that will reduce protocol complexity; the nature of the problem is that there are many small fixes.

An example that has been largely completed, and that can serve as a blueprint for dealing with other issues, is the removal of the SELFDESTRUCT opcode. SELFDESTRUCT was the only opcode that could modify an unlimited number of storage slots within a single block, which forced client implementations to take on significant extra complexity to avoid DoS attacks. The opcode's original purpose was to enable voluntary state clearing, allowing the state size to decrease over time. In practice, few people ended up using it. In the Dencun hard fork, the opcode was weakened so that it only deletes accounts created in the same transaction. This resolved the DoS issue and allowed for significant simplification of client code. In the future, it may make sense to remove the opcode entirely.
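Here is a minimal sketch of the post-Dencun behavior as specified in EIP-6780. A contract created earlier only sends its balance to the beneficiary and keeps its code and storage. Full deletion happens only if the contract was created in the same transaction. The state model and names are hypothetical, and real clients defer the deletion to the end of the transaction.

```python
from dataclasses import dataclass, field

@dataclass
class Account:
    balance: int
    storage: dict = field(default_factory=dict)

def selfdestruct(state: dict, addr: str, beneficiary: str, created_this_tx: set) -> None:
    """Sketch of EIP-6780 SELFDESTRUCT semantics on a toy state dict."""
    acct = state[addr]
    if beneficiary != addr:
        state.setdefault(beneficiary, Account(0)).balance += acct.balance
        acct.balance = 0
    if addr in created_this_tx:
        del state[addr]  # deletes code, storage and any remaining balance
    # Otherwise nothing is deleted, so no storage needs to be cleared: this
    # bounded behavior is what removed the DoS concern.
```

Calling it on a pre-existing contract leaves that contract's storage intact. Calling it on a contract deployed earlier in the same transaction removes the account.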

Some key examples of protocol simplification opportunities that have been identified to date include the following. First, some examples outside of the EVM; these are relatively non-invasive and therefore easier to reach consensus on and implement in a shorter time.

RLP → SSZ Conversion: Originally, Ethereum objects were serialized using an encoding called RLP. Today, the beacon chain uses SSZ, which is significantly better in many ways, including supporting not only serialization but also hashing. Eventually, we hope to get rid of RLP completely and move all data types to SSZ structures, which in turn will make upgrades much easier. Current proposed EIPs for this include [1] [2] [3].

Removing Legacy Transaction Types: There are too many transaction types today, and many of them could be removed. A more modest alternative to removing them altogether is an account abstraction feature, through which Smart Accounts could include code to handle and validate legacy transactions if they so choose.

LOG Reform: Logs create bloom filters and other logic that add complexity to the protocol, but clients do not actually use them because they are too slow. We can remove these features and instead put effort into alternatives, such as out-of-protocol decentralized log-reading tools that use modern techniques like SNARKs.

Finally remove the beacon chain sync committee mechanism: The sync committee mechanism was originally introduced to enable light client verification for Ethereum. However, it adds complexity to the protocol. Eventually, we will be able to verify the Ethereum consensus layer directly using SNARKs, which will remove the need for a dedicated light client verification protocol. Consensus changes could potentially let us remove sync committees even earlier, by creating a more "native" light client protocol that involves verifying signatures from a random subset of Ethereum consensus validators.

Data format harmonization: Today, execution state is stored in Merkle Patricia trees, consensus state is stored in SSZ trees, and blobs are committed with KZG commitments. In the future, it makes sense to create a single unified format for block data and state. That format would need to cover all the important requirements: (i) simple proofs for stateless clients, (ii) serialization and erasure coding of data, and (iii) standardized data structures.

Removing Beacon Chain Committees: This mechanism was originally introduced to support a specific version of execution sharding. Instead, we ended up with sharding via L2 and blobs. As such, committees are unnecessary, and work is underway to remove them.

Removing Mixed Endianness: The EVM is big-endian, and the consensus layer is little-endian. It might make sense to reconcile the two and make everything one or the other (probably big-endian, because the EVM is harder to change).
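To illustrate the point in the RLP → SSZ item that SSZ supports hashing as well as serialization, here is a minimal sketch restricted to containers whose fields are all uint64. SSZ serializes such a container as little-endian 8-byte fields. Its hash_tree_root puts each field in its own 32-byte chunk and Merkleizes the chunks with SHA-256, so a single field can be proven with a short branch. With RLP, by contrast, an object such as a block header is identified by the Keccak-256 hash of its whole flat encoding. The function names are illustrative, and the full SSZ specification covers many more types.

```python
import hashlib

def ssz_serialize_uint64s(values: list) -> bytes:
    # uint64 fields are 8 little-endian bytes each; a fixed-size container is their concatenation
    return b"".join(v.to_bytes(8, "little") for v in values)

def hash_tree_root_uint64s(values: list) -> bytes:
    # Each basic field becomes one 32-byte chunk (value first, zero-padded), then the
    # chunks are Merkleized, padding to a power of two with zero chunks.
    chunks = [v.to_bytes(8, "little") + b"\x00" * 24 for v in values]
    width = 1
    while width < len(chunks):
        width *= 2
    chunks += [b"\x00" * 32] * (width - len(chunks))
    while len(chunks) > 1:
        chunks = [hashlib.sha256(chunks[i] + chunks[i + 1]).digest()
                  for i in range(0, len(chunks), 2)]
    return chunks[0]
```

For a three-field container, the root is the hash of two subtree hashes, and the third field's chunk is paired with a zero chunk.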
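For context on the LOG reform item, here is a sketch of how a per-block log bloom filter works. Ethereum's logsBloom is 2048 bits, and for each log address and topic it sets three bits taken from pairs of bytes of the Keccak-256 hash. Python's standard library has no Keccak-256, so `hashlib.sha3_256` (standard SHA-3, which pads differently) is used as a stand-in. The bit positions therefore will **not** match real Ethereum blooms.

```python
import hashlib

BLOOM_BITS = 2048

def bloom_bits(item: bytes) -> list:
    # Three 11-bit positions from the first six bytes of the hash.
    # sha3_256 stands in for Keccak-256, so positions differ from real blooms.
    h = hashlib.sha3_256(item).digest()
    return [((h[i] << 8) | h[i + 1]) % BLOOM_BITS for i in (0, 2, 4)]

def bloom_add(bloom: int, item: bytes) -> int:
    for b in bloom_bits(item):
        bloom |= 1 << b
    return bloom

def may_contain(bloom: int, item: bytes) -> bool:
    # False means "definitely absent"; True may be a false positive, so a client
    # still has to fetch and scan the block's receipts to be sure.
    return all(bloom >> b & 1 for b in bloom_bits(item))
```

The filter has no false negatives. However, as a block accumulates logs, more bits get set and false positives become more frequent. This is one commonly cited reason the per-block blooms are of limited practical use.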

Now, some examples from the EVM internals:

Simplifying the gas mechanism: The current gas rules are not well optimized to give clear limits on the amount of resources required to validate a block. Key examples include (i) storage read/write costs, which are meant to limit the number of reads/writes in a block but are currently quite arbitrary, and (ii) memory expansion rules, under which it is currently hard to estimate the maximum memory consumption of the EVM. Proposed fixes include stateless gas cost changes, which harmonize all storage-related costs into a simple formula, and memory pricing proposals.

Remove precompiles: Many of Ethereum's current precompiles are both needlessly complex and relatively unused, and they account for a large share of consensus-failure near misses. Two ways to deal with this are (i) to remove the precompile outright, and (ii) to replace it with a (inevitably more expensive) piece of EVM code that implements the same logic. This draft EIP proposes to do this first for the identity precompile; later, RIPEMD160, MODEXP, and BLAKE may be removed.

Remove gas observability: Make it so that EVM execution can no longer see how much gas it has left. This would break some applications (most notably sponsored transactions), but would make future upgrades easier (e.g. to more advanced versions of multi-dimensional gas). The EOF spec already makes gas unobservable, but for this to simplify the protocol, EOF would need to be mandatory.

Improvements in static analysis: Today's EVM code is difficult to statically analyze, especially because jumps can be dynamic. This also makes it harder to make optimized EVM implementations (pre-compile EVM code into other languages). We can fix this by removing dynamic jumps (or making them more expensive, e.g. gas cost is linear in the total number of JUMPDESTs in the contract). EOF does this, but getting the protocol simplification gains from it requires making EOF mandatory.
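For the gas-mechanism item, the legacy EVM's memory expansion charge is 3·a + ⌊a²/512⌋ gas for a memory of a 32-byte words. The sketch below computes that cost and the largest memory a single call frame could afford with a given budget. One reason whole-EVM memory is hard to bound is that each nested call frame has its own memory and its own quadratic term, so a block's peak memory is not simply the inverse of this formula. The function names are illustrative.

```python
G_MEMORY = 3         # linear per-word coefficient
QUAD_DIVISOR = 512   # divisor of the quadratic term

def memory_cost(words: int) -> int:
    """Total gas charged once a call frame's memory has grown to `words` 32-byte words."""
    return G_MEMORY * words + words * words // QUAD_DIVISOR

def max_words_for_gas(gas: int) -> int:
    """Largest memory size (in words) one frame can pay for with `gas`, via binary search."""
    lo, hi = 0, 1
    while memory_cost(hi) <= gas:  # find an upper bound that is too expensive
        hi *= 2
    while lo + 1 < hi:             # invariant: cost(lo) <= gas < cost(hi)
        mid = (lo + hi) // 2
        if memory_cost(mid) <= gas:
            lo = mid
        else:
            hi = mid
    return lo
```

For example, `memory_cost(32)` is 98 gas, and 5,120 gas buys exactly 1,024 words in a single frame.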
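The static-analysis item can be made concrete. In the legacy EVM, JUMP and JUMPI take their destination from the stack at runtime. The only static guarantee is that the target must be a JUMPDEST opcode that is not inside PUSH immediate data, so clients precompute that set for every contract. The sketch below shows this analysis. It cannot tell which of these destinations a given jump will actually use, which is what makes control flow hard to analyze. The function name is illustrative.

```python
JUMPDEST = 0x5B
PUSH1, PUSH32 = 0x60, 0x7F

def valid_jumpdests(code: bytes) -> set:
    """Positions a legacy-EVM dynamic jump may target: JUMPDEST bytes that are
    real opcodes rather than bytes inside PUSH immediate data."""
    dests, pc = set(), 0
    while pc < len(code):
        op = code[pc]
        if op == JUMPDEST:
            dests.add(pc)
        if PUSH1 <= op <= PUSH32:
            pc += op - PUSH1 + 1  # skip the 1..32 immediate bytes
        pc += 1
    return dests
```

In `bytes([0x60, 0x5B, 0x5B])`, the first 0x5B is PUSH1 data and only position 2 is a valid destination. With static jumps, as in EOF, every target is known at deploy time and this runtime bookkeeping goes away.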

Next steps for the Purge: https://notes.ethereum.org/I_AIhySJTTCYau_adoy2TA

SELFDESTRUCT:https://hackmd.io/@vbuterin/selfdestruct

SSZ-ification EIPs: [1] [2] [3]

Stateless gas cost changes: https://eips.ethereum.org/EIPS/eip-4762

Linear memory pricing: https://notes.ethereum.org/ljPtSqBgR2KNssu0YuRwXw

Precompile removals: https://eips.ethereum.org/EIPS/eip-4762


Bloom filter removal: A method for off-chain secure log retrieval using incremental verifiable computation (read: recursive STARKs): https://notes.ethereum.org/XZuqy8ZnT3KeG1PkZpeFXw

The main trade-off in this kind of feature simplification is (i) how much and how fast we simplify vs. (ii) backward compatibility. Ethereum's value as a chain comes from being a platform where you can deploy an application and be confident that it will still work years later. At the same time, it is possible to take this ideal too far and, in the words of William Jennings Bryan, "nail Ethereum to the cross of backward compatibility." If only two applications in all of Ethereum use a feature, one of which has had no users for years and the other of which is almost completely unused, and together they secure a total of $57 of value, then we should remove that feature, paying the victims $57 out of pocket if necessary.

The broader societal problem is to create a standardized pipeline for making non-urgent, backwards-compatibility-breaking changes. One way to approach this problem is to examine and extend existing precedents, such as the SELFDESTRUCT process. That pipeline would look something like this:

Step 1: Start a discussion about removing feature X.

Step 2: Conduct an analysis to determine how disruptive removing X would be to applications, and based on the results, either (i) abandon the idea, (ii) proceed as planned, or (iii) identify a modified "least disruptive" way to remove X and proceed.

Step 3: Create a formal EIP to deprecate X. Make sure popular high-level infrastructure (e.g. programming languages, wallets) respects this and stops using the feature.

Step 4: Finally, actually remove X.

There should be a years-long process between steps 1 and 4, with clear information about which items are at which step. There is a trade-off between how aggressive and fast the feature-removal pipeline is and being more conservative and putting more resources into other areas of protocol development, but we are still far from the Pareto frontier.

The main set of changes proposed for the EVM is the EVM Object Format (EOF). EOF introduces a large number of changes, such as banning gas observability and code observability (i.e. no CODECOPY) and allowing only static jumps. The goal is to let the EVM be upgraded further, in ways that give it stronger properties, while maintaining backward compatibility (since the pre-EOF EVM still exists).

The benefit of this is that it creates a natural path for adding new EVM features and encouraging migration to a stricter EVM with stronger guarantees. The downside is that it significantly increases the complexity of the protocol unless we can find a way to eventually deprecate and remove the old EVM. A major question is: What role does EOF play in the EVM simplification proposal, especially if the goal is to reduce the complexity of the entire EVM?

Many of the "Improvement" proposals in the rest of the roadmap are also opportunities to simplify older features. To repeat some of the examples above:

Switching to single-slot finality gives us the opportunity to remove committees, reformulate economics, and make other simplifications related to proof-of-stake.

Fully implementing account abstraction would let us remove a lot of the existing transaction-processing logic by moving it into a piece of "default account EVM code" that all EOAs could be replaced with.

If we move the Ethereum state to a binary hash tree, this can be reconciled with a new version of the SSZ so that all Ethereum data structures can be hashed in the same way.

A more radical strategy for simplifying Ethereum is to keep the protocol as is, but turn large parts of the protocol from protocol functionality into contract code.

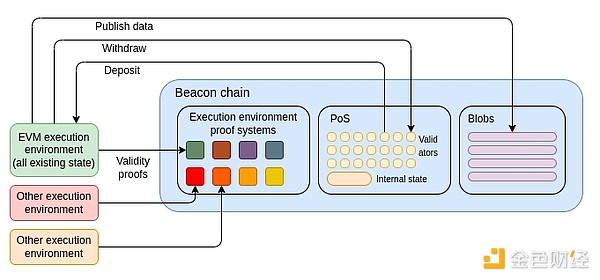

The most extreme version would be to make Ethereum L1 "technically" just the beacon chain, and introduce a minimal VM (e.g. RISC-V, Cairo, or a simpler VM specialized for proof systems) that allows anyone else to create their own rollups. The EVM would then become the first of these rollups. Ironically, this is exactly the same outcome as the 2019-20 execution environment proposals, although SNARKs make it more feasible to actually implement.

A more moderate approach would be to keep the relationship between the beacon chain and the current Ethereum execution environment unchanged, but do an in-place swap of the EVM. We could choose RISC-V, Cairo, or some other VM as the new "official Ethereum VM", and then force all EVM contracts to be converted to the new VM code (either by compilation or interpretation) that interprets the logic of the original code. In theory, this could even be done with the "target VM" as the EOF version.
